Computer technology is adding tools, toys and gadgets of all kinds to our lives, but it is also creating complex questions about how society uses technology. Personal privacy, business regulation, national security, economic competitiveness, international relations and social justice are all being cast in a new light as information changes hands with greater speed and in greater quantities.

As Ed Felten, professor of computer science, points out, neither technologists nor policymakers are equipped to deal with these issues on their own. The pace and impact of the changes demand that people in these two traditionally isolated areas take notice of each other and begin working together.

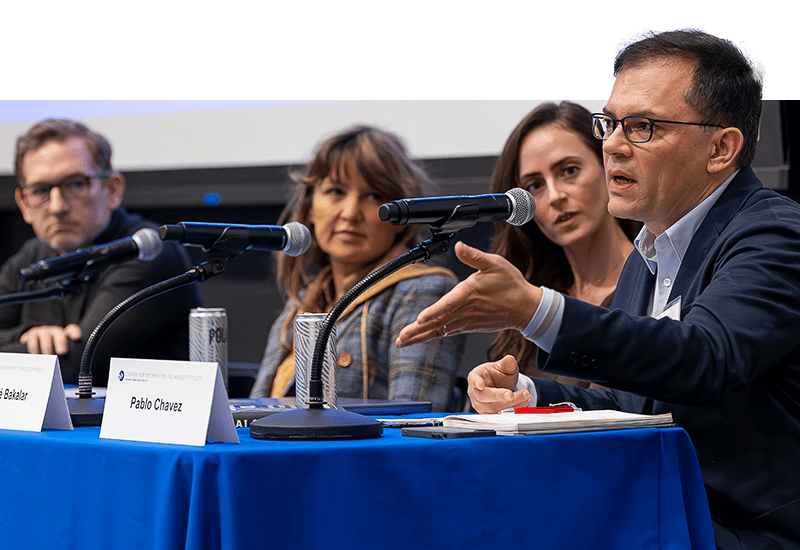

To address these issues, Princeton University is creating the Center for Information Technology Policy and has appointed Felten as founding director. Felten, who joined the Princeton faculty in 1993, has published extensively about Web browser design and computer security. He also has become a major voice in debates over computer privacy and digital copyright protection. The EQuad News recently spoke with Felten about issues he sees emerging at the nexus of information technology and policy.

EQN: Why is it necessary to make a special effort to address these issues now?

Felten: To start with, there is the increasing pervasiveness of digital technology in all parts of life and the economy. Society is undergoing a transformation that is increasing in scope and pace, and because of that, public policy decisions are getting more complex. There is this phenomenon that people call convergence: Rather than having different kinds of devices (telephone, television, radio, notebook and so on), there is a movement toward a smaller number of devices that do more things. The personal computer is one example; the cell phone is another. So where you used to have separate regulations for these different devices (one regulatory regime for telephones, one for radios, one for data networks), when you have convergence you now find all kinds of new questions. How will the telephone regulations get applied to computers? There’s a big fight going on right now: How will broadcasting regulations get applied to computers as computers become able to receive broadcasts directly off the air and online distribution starts to replace at least some broadcasting?

You also see this phenomenon in automated trading markets. Stock exchanges are getting computerized, which means that things that used to get done by people yelling at each other on the floor of the exchange are now being done by computer programs. And so to the extent that there was regulation of what those guys did before, that regulation naturally moves to the computer systems that replace the trading pits. Securities regulation starts to control certain kinds of technology, and so there’s a sort of culture clash between the worlds of technologists and regulators. It used to be possible for technologists to go into the lab and do what they thought made sense technically. Now they seem to be much more aware that they need to clear what they’re doing with legal and understand the policy situation. And you see big technology companies getting much more involved and engaged in the Washington policy process.

Suddenly there are a lot more options for how technology can work and a lot of design choices. Some people see that as providing more opportunities to regulate, more opportunities to foster whatever outcomes or values they may want.

Don’t these questions work themselves out over time in the marketplace?

What is at stake?

There is a mismatch between the speed at which technology changes and the speed at which regulators adapt to technology, so you have lost opportunities. New products can’t exist because they’re illegal for reasons that no longer make sense, or people get away with doing harmful things because the regulators haven’t caught up…

Wait, I can imagine the latter, but can you give an example of the former?

Let’s see. Here is an argument that not everyone agrees with, but one example has to do with indecency in broadcasting. There used to be rules that during certain hours of the day programming had to be kid-friendly. But two things have changed in recent years. One is technologies like the V-chip that let parents block certain shows and channels. The other is the rise of all kinds of technologies where people record things at one time and watch them at another, which means that restricting the broadcast of something at a particular time doesn’t necessarily have the efficacy that it used to have. So these technology changes have weakened the case for the government zoning TV programming by time of day.

What other opportunities might be lost?

I think economic competitiveness will be an increasing issue in this area. There is certainly the risk of overregulating or regulating poorly, and if you do that it’s definitely an economic drag. It’s a drag on productivity and growth of technology. I think people have an increasing sense that the U.S. is not automatically the leader in technology and that we have to keep earning that leadership. The policy choices that are being made affect competitiveness. It’s also an issue that these technologies tend to break down borders; they tend to allow organizations and groups to operate across borders, so there’s also an increased need for international cooperation on all of these issues.

One of the subject areas you are thinking of addressing in the new center is the proliferation of spam e-mail. Is spam more than just an annoyance?

The cost of spam is higher than just the annoyance. People trust e-mail a lot less because they get so much junk. In the wake of Hurricane Katrina, people sent out fraudulent e-mails that appeared to be fund-raising appeals. So when you get a request from the actual Red Cross, you’re more likely to throw it away out of worry that it’s fraudulent. There’s also the related issue of phishing, which is people sending fraudulent e-mails to trick the recipient into revealing passwords and so on. Many online merchants and banks have given up on using e-mail to get messages to their customers because there are so many fake messages.

Also, spam will spread from e-mail to other communication technologies. You’ll see more spam in the phone system. You thought telemarketing was bad before? There’s been a lot of success in regulating telemarketing with do-not-call lists, but there’s a real concern that the list will break down as the cost of calling internationally gets lower and as the phone system moves away from the centralized control of a few large companies toward a more open, Internet-based architecture. The nightmare scenario is that the phone system breaks down in a flood of spam-like telemarketing, and it’s a plausible outcome. You had better be thinking about it, because if spammers make the switch to the phone system and instead of e-mails you get 150 calls a day, that’s pretty bad.

In designing regulations, you always have to be concerned with whether you can enforce them. For example, the FTC considered creating a do-not-e-mail list just like the do-not-call list, and I worked with them as a consultant on that. They decided, I think correctly, that it didn’t make sense to try to do that because it would be less effective and there would be greater privacy risks.

There’s a technological arms race between filter designers and the spammers, and one of the things we can do with our technical knowledge is look at that arms race and predict where it’s going.

What can Princeton contribute to this overall set of issues?

The tendency has been that people who know a lot about technology are generally not so involved in the policy process. And many of the people who are involved in policy are trained in law or trained in things other than technology and often don’t have access to the technical nuances that matter. One of the big services we can provide is to participate in policy discussions from a technically knowledgeable viewpoint. We can be in the middle and look at both the policy questions and the technology issues in a more sophisticated way. We also can educate a new generation of students (whether humanities majors, scientists or engineers) who have some understanding of each other’s fields and who go on to become leaders who are prepared to anticipate problems before they emerge.