Some allow cars to peer through dense fog and heavy snow, while others glance around corners for hidden objects or pedestrians. Another is the size of a grain of salt.

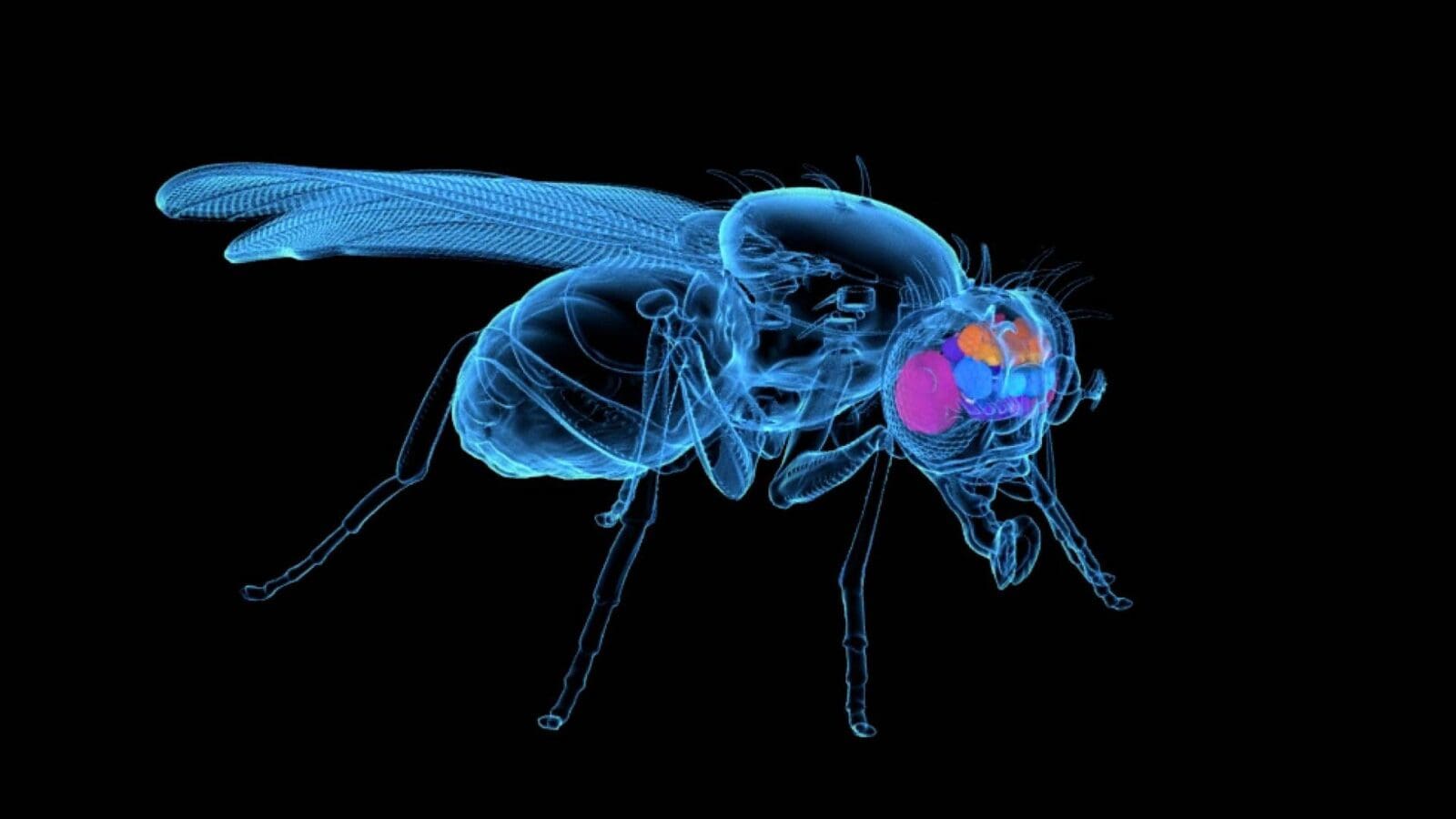

Heide has accomplished these feats by closely integrating hardware and software, aiming to optimize cameras for specific purposes. His group’s research in computational imaging could advance real-time sensing and object detection capabilities for personal devices, medical imaging, robots, and autonomous vehicles.

“We are interested in thinking about codesign of the optics and the algorithms together,” said Heide, an assistant professor of computer science. “We want to build domain-specific camera systems: the best camera for object detection, or low-light imaging, or scene understanding, or medical imaging.”

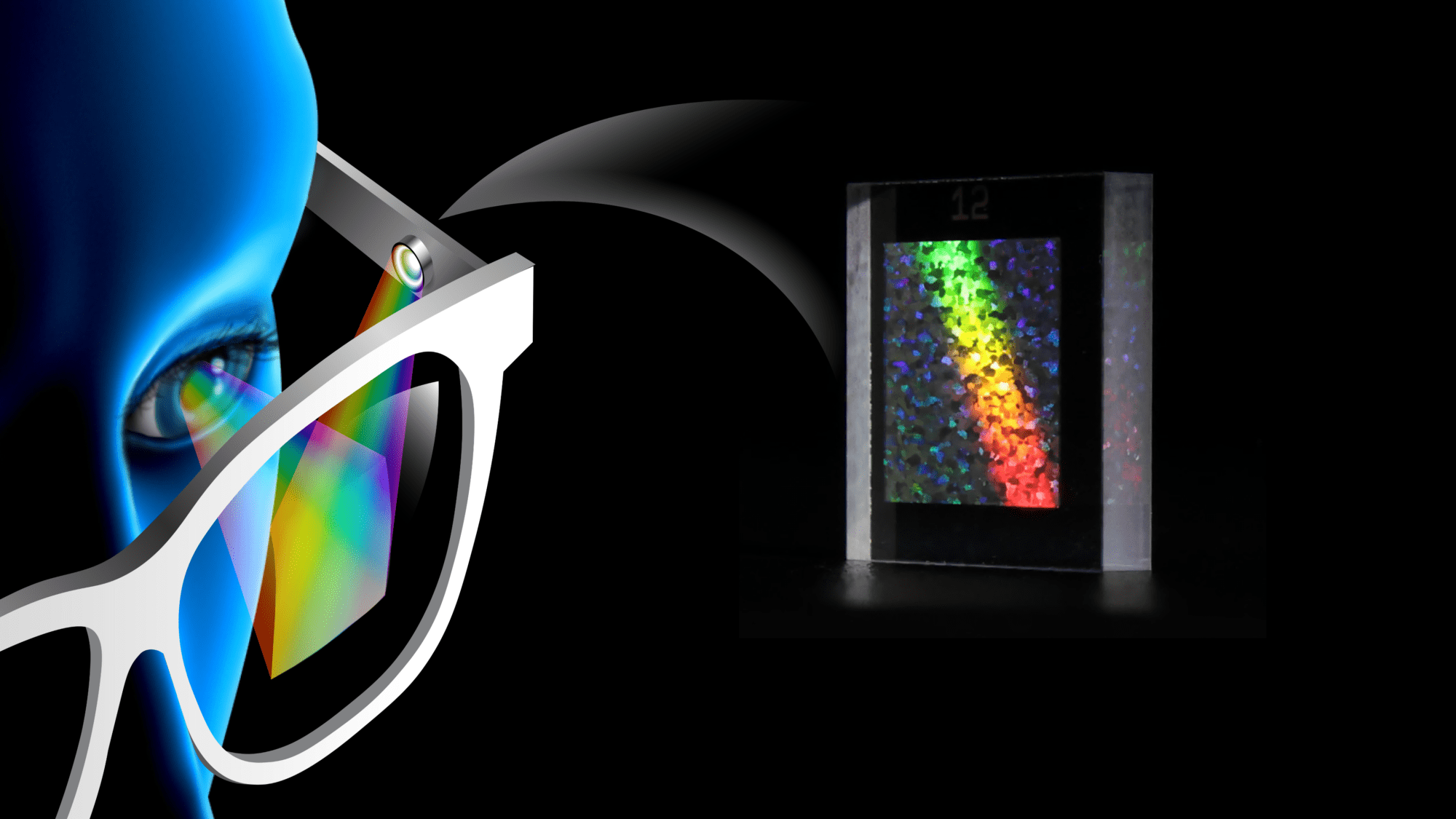

One of the imaging systems that Heide focuses on is vision technology for self-driving cars. His group has developed an automated system that uses radar to allow cars to peer around corners and spot oncoming traffic and pedestrians. And with a new grant from Princeton’s Metropolis Project, they are working on systems for navigation in adverse weather.

Existing systems for driverless cars rely heavily on lidar, which uses laser beams to detect objects in the environment. Lidar and other current imaging systems perform poorly in snow, fog, and rain, and at distances beyond about 1,000 feet (300 meters), yet reliable sensing at that range is essential for the safety of trucking. These limitations are a major hurdle to the wide adoption of driverless vehicles, especially for transportation into cities from outlying areas, which will have less infrastructure to support them.

Using a test vehicle from Mercedes-Benz, a partner on the project, Heide's team is collecting sensor data in varied weather conditions in Princeton and New York City and building physical simulations based on this data. With machine learning and optimization methods, the team will develop systems that enable suites of sensors to dynamically adapt their operation to changing conditions.