Cutting-edge digital chips boast circuit features at the scale of a virus, and still cannot keep pace with the accelerating computational demands of AI.

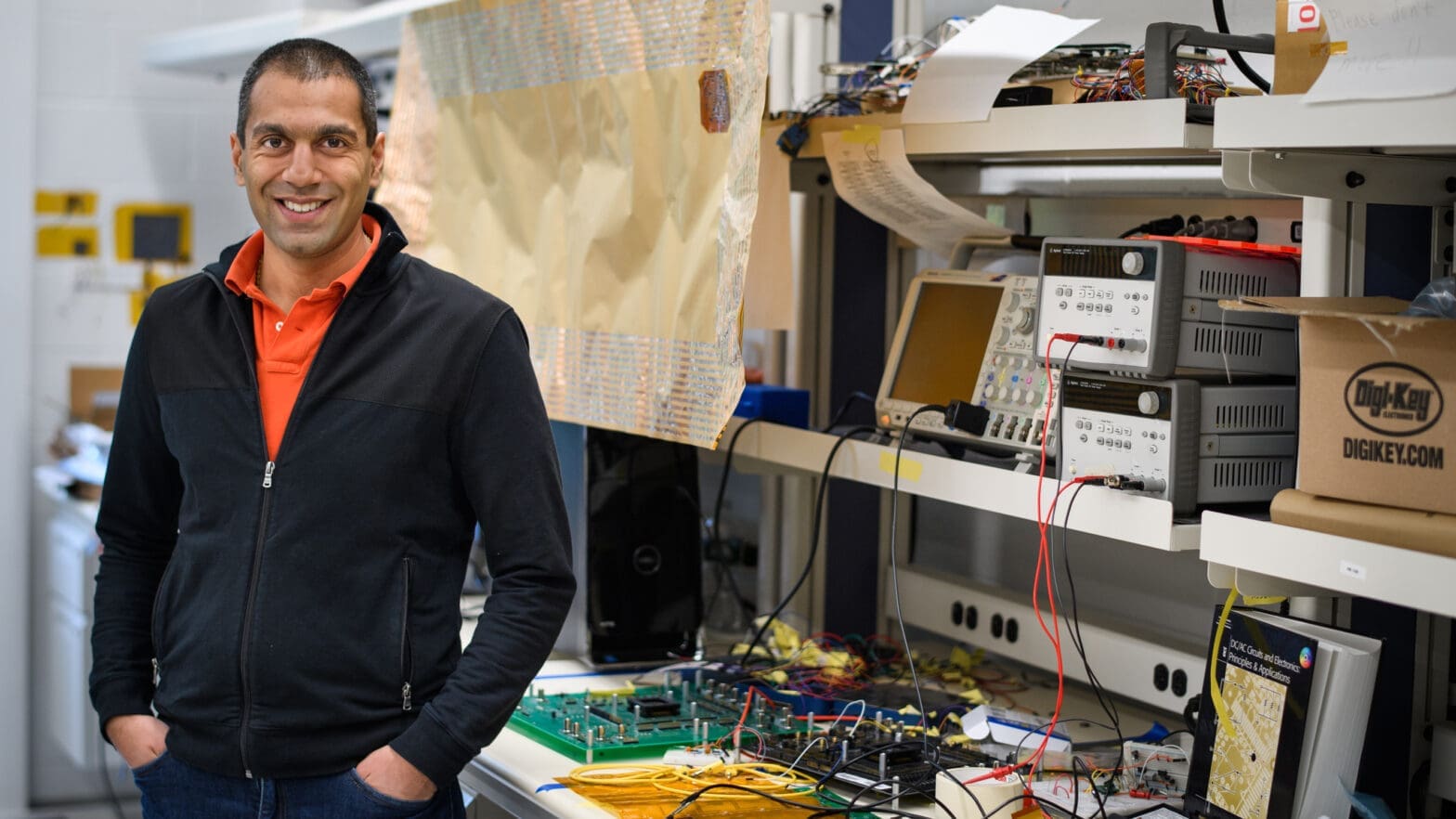

Verma, a professor of electrical and computer engineering, has developed a new kind of chip based on five key inventions from his lab. It promises a step change in AI hardware — 10 times more computational power per square millimeter and 10 times more energy efficiency than today’s best chips.

The key lies in where and how all those trillions of mathematical operations are performed each second. To cut the time spent shuttling data between processor and memory, Verma found a way to compute directly in memory cells using analog signals, reimagining the physics of computation for modern workloads.

The Defense Advanced Research Projects Agency recently committed $18.2 million in funding to enable Verma and his colleagues to explore this technology’s potential to power AI in laptops, phones, and vehicles. The project could lead to another massive expansion of AI’s capabilities, particularly by broadening its use in safety-critical settings, from highways and hospitals to satellite orbits and beyond.

This article was adapted from a previously published story.