The number of connections between neurons virtually explodes in our first few years. After that the brain starts pruning away unused portions of this vast electrical network, slimming to roughly half the number by the time we reach adulthood. The over-provisioning of the toddler brain allows us to acquire language and develop fine motor skills. But what we don’t use, we lose.

Now this ebb and flow of biological complexity has inspired a team of researchers at Princeton to develop a new approach to artificial intelligence, one that produces programs that meet or surpass industry standards for accuracy using only a fraction of the energy. In a pair of papers published earlier this year, the researchers showed how to start with a simple design for an AI network, grow the network by adding artificial neurons and connections, then prune away unused portions, leaving a lean but highly effective final product.

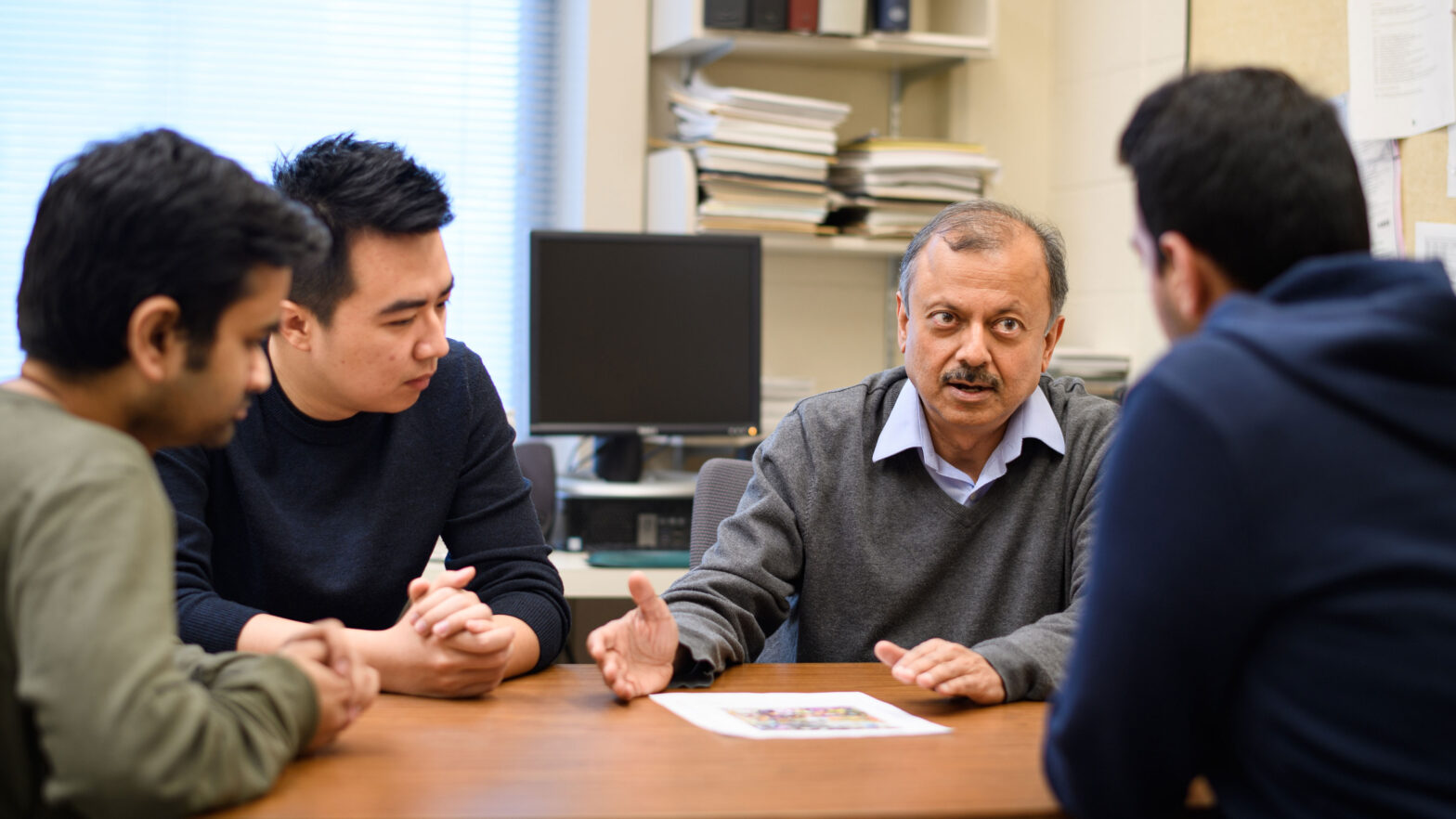

“Our approach is what we call a grow-and-prune paradigm,” said Professor of Electrical Engineering Niraj Jha. “It’s similar to what a brain does from when we are a baby to when we are a toddler.” In its third year, the human brain starts snipping away connections between brain cells. This process continues into adulthood, so that the fully developed brain operates at roughly half its synaptic peak.

“The adult brain is specialized to whatever training we’ve provided it,” Jha said. “It’s not as good for general-purpose learning as a toddler brain.”

Growing and pruning results in software that requires a fraction of the computational power, and so uses far less energy, to make equally good predictions about the world. Constraining energy use is critical in getting this kind of advanced AI – called machine learning – onto small devices like phones and watches.

“It is very important to run the machine learning models locally because transmission [to the cloud] takes lots of energy,” said Xiaoliang Dai, a former Princeton graduate student and first author on the two papers. Dai is now a research scientist at Facebook.

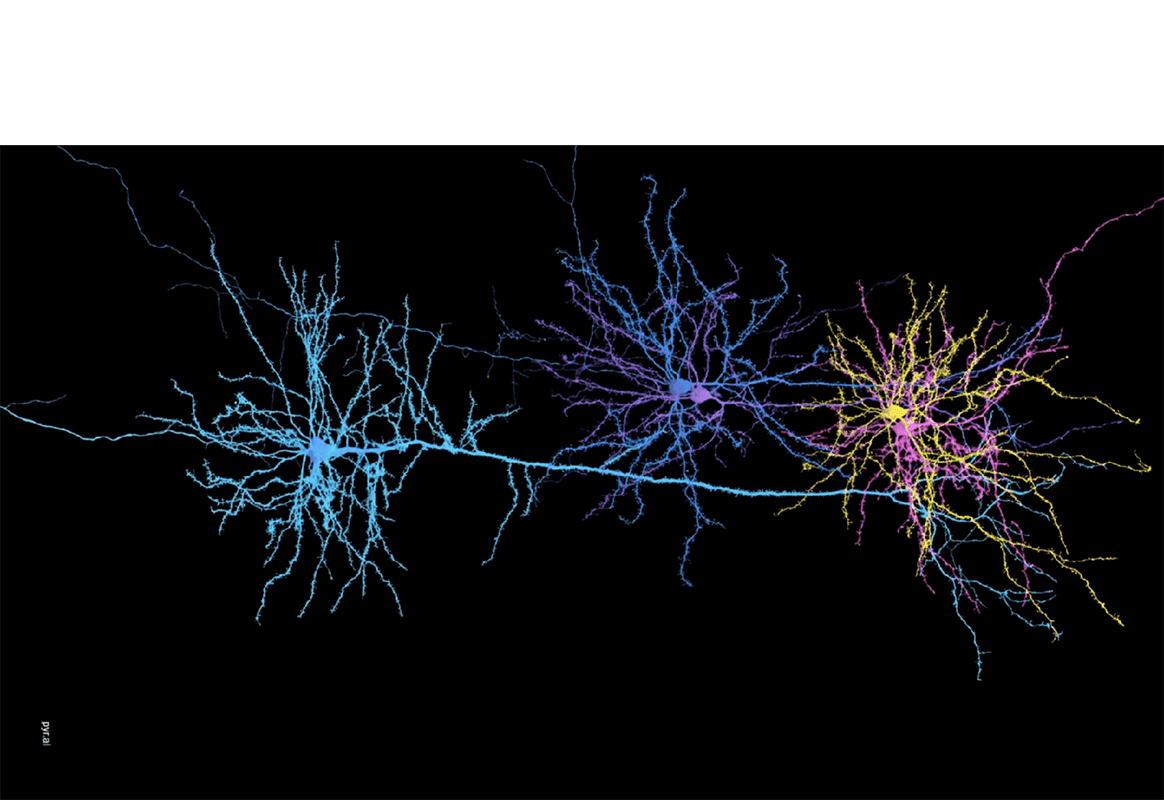

In the first study, the researchers re-examined the foundations of machine learning – the abstract code structures called artificial neural networks. Borrowing inspiration from early childhood development, the team designed a neural network synthesis tool (NeST) that re-created several top neural networks from scratch, automatically, using sophisticated mathematical models first developed in the 1980s.

NeST starts with only a small number of artificial neurons and connections, increases in complexity by adding more neurons and connections to the network, and once it meets a given performance benchmark, begins narrowing with time and training. Previous researchers had employed similar pruning strategies, but the grow-and-prune combination – moving from the “baby brain” to the “toddler brain” and slimming toward the “adult brain” – represented a leap from old theory to novel demonstration.
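
The grow-then-prune loop can be sketched in a few lines of Python. This is a toy illustration, not NeST itself: it uses a single linear layer on made-up data, and it assumes that growth activates the inactive connection with the largest gradient magnitude and that pruning removes connections whose weights fall below a small threshold. Those criteria, along with every function name and parameter here, are assumptions chosen for illustration rather than details given in this article.

```python
import random

def make_toy_data(n_samples=200, n_inputs=6, seed=0):
    """Toy regression task (an assumption): only inputs 1 and 4 affect the target."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        x = [rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
        data.append((x, 2.0 * x[1] - 3.0 * x[4]))
    return data

def predict(weights, mask, x):
    # Only active connections (mask[i] is True) contribute to the output.
    return sum(w * xi for w, m, xi in zip(weights, mask, x) if m)

def loss(weights, mask, data):
    return sum((predict(weights, mask, x) - y) ** 2 for x, y in data) / len(data)

def gradients(weights, mask, data):
    """Gradient of the mean squared error for every connection, active or not."""
    grads = [0.0] * len(weights)
    for x, y in data:
        err = predict(weights, mask, x) - y
        for i, xi in enumerate(x):
            grads[i] += 2.0 * err * xi / len(data)
    return grads

def train(weights, mask, data, steps=300, lr=0.1):
    # Plain gradient descent that updates active connections only.
    for _ in range(steps):
        grads = gradients(weights, mask, data)
        for i, g in enumerate(grads):
            if mask[i]:
                weights[i] -= lr * g

def grow_and_prune(data, n_inputs, target_loss=1e-3, prune_below=0.05):
    # "Baby" network: a single seed connection.
    weights = [0.0] * n_inputs
    mask = [False] * n_inputs
    mask[0] = True
    train(weights, mask, data)

    # Grow: add one connection at a time until the benchmark is met.
    while loss(weights, mask, data) > target_loss and not all(mask):
        grads = gradients(weights, mask, data)
        inactive = [i for i in range(n_inputs) if not mask[i]]
        new = max(inactive, key=lambda i: abs(grads[i]))
        mask[new] = True
        train(weights, mask, data)

    # Prune: drop connections that ended up with near-zero weights, then retrain.
    for i in range(n_inputs):
        if mask[i] and abs(weights[i]) < prune_below:
            mask[i], weights[i] = False, 0.0
    train(weights, mask, data)
    return weights, mask

data = make_toy_data()
weights, mask = grow_and_prune(data, n_inputs=6)
print("active connections:", [i for i, m in enumerate(mask) if m])
```

On this toy task the seed connection carries no useful signal, so the sketch grows toward the two informative inputs and then prunes the seed away, which mirrors the baby-to-toddler-to-adult progression in miniature.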

The second paper, which includes collaborators at Facebook and the University of California, Berkeley, introduced a framework called Chameleon that starts with desired outcomes and works backward to find the right tool for the job. With hundreds of thousands of variations available in the particular facets of a design, engineers face a paradox of choice that goes well beyond human capacity. For example: The architecture for recommending movies does not look like the one that recognizes tumors. The system tuned for lung cancer looks different from one for cervical cancer. Dementia assistants might look different for women and men. And so on, ad infinitum.

Jha described Chameleon as steering engineers toward a favorable subset of designs. “It’s giving me a good neighborhood, and I can do it in CPU minutes,” Jha said, referring to a measure of computational process time. “So I can very quickly get the best architecture.” Rather than the whole sprawling metropolis, one only has to search a few streets.

Chameleon works by sampling and training a relatively small number of architectures that represent a wide variety of options, then predicting how other designs will perform under a given set of conditions. Because it slashes upfront costs and works within lean platforms, the highly adaptive approach “could expand access to neural networks for research organizations that don’t currently have the resources to take advantage of this technology,” according to a blog post from Facebook.
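
The sample-then-predict idea can be illustrated with a short Python sketch. It is not Chameleon's actual method: the accuracy function is a synthetic stand-in for really training a network, the cost proxy (depth times width) is invented, and the nearest-neighbor predictor is a simple substitute chosen for brevity. All names and numbers below are hypothetical.

```python
import itertools
import math
import random

def measure_accuracy(arch):
    """Synthetic stand-in (an assumption) for training an architecture and scoring it."""
    depth, width = arch
    return 1.0 - 1.0 / (1.0 + 0.05 * depth * width)

def cost(arch):
    """Invented proxy for energy or latency: depth times width."""
    depth, width = arch
    return depth * width

def distance(a, b):
    # Compare depths directly and widths on a log scale.
    return math.hypot(a[0] - b[0], math.log2(a[1]) - math.log2(b[1]))

def predict_accuracy(arch, measured, k=3):
    """Inverse-distance-weighted average over the k nearest measured designs."""
    nearest = sorted(measured, key=lambda item: distance(arch, item[0]))[:k]
    weights = [1.0 / (distance(arch, a) + 1e-6) for a, _ in nearest]
    return sum(w * acc for w, (_, acc) in zip(weights, nearest)) / sum(weights)

def search(candidates, budget, n_samples=8, seed=0):
    # Train (here: "measure") only a small random sample of the design space.
    rng = random.Random(seed)
    sampled = rng.sample(candidates, n_samples)
    measured = [(arch, measure_accuracy(arch)) for arch in sampled]
    # Predict everything else and keep the best design that fits the budget.
    feasible = [a for a in candidates if cost(a) <= budget]
    best = max(feasible, key=lambda a: predict_accuracy(a, measured))
    return best, measured

# 40 candidate designs as (depth, width) pairs; only 8 are ever "trained".
candidates = list(itertools.product(range(1, 9), [8, 16, 32, 64, 128]))
best, measured = search(candidates, budget=256)
print("chosen (depth, width):", best, "after measuring", len(measured), "designs")
```

The point of the sketch is the division of labor: a handful of expensive measurements feed a cheap predictor, and the search over the full set of candidates then runs against the predictor instead of against real training runs.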

In addition to Jha and Dai, Princeton graduate student Hongxu Yin contributed to both papers.