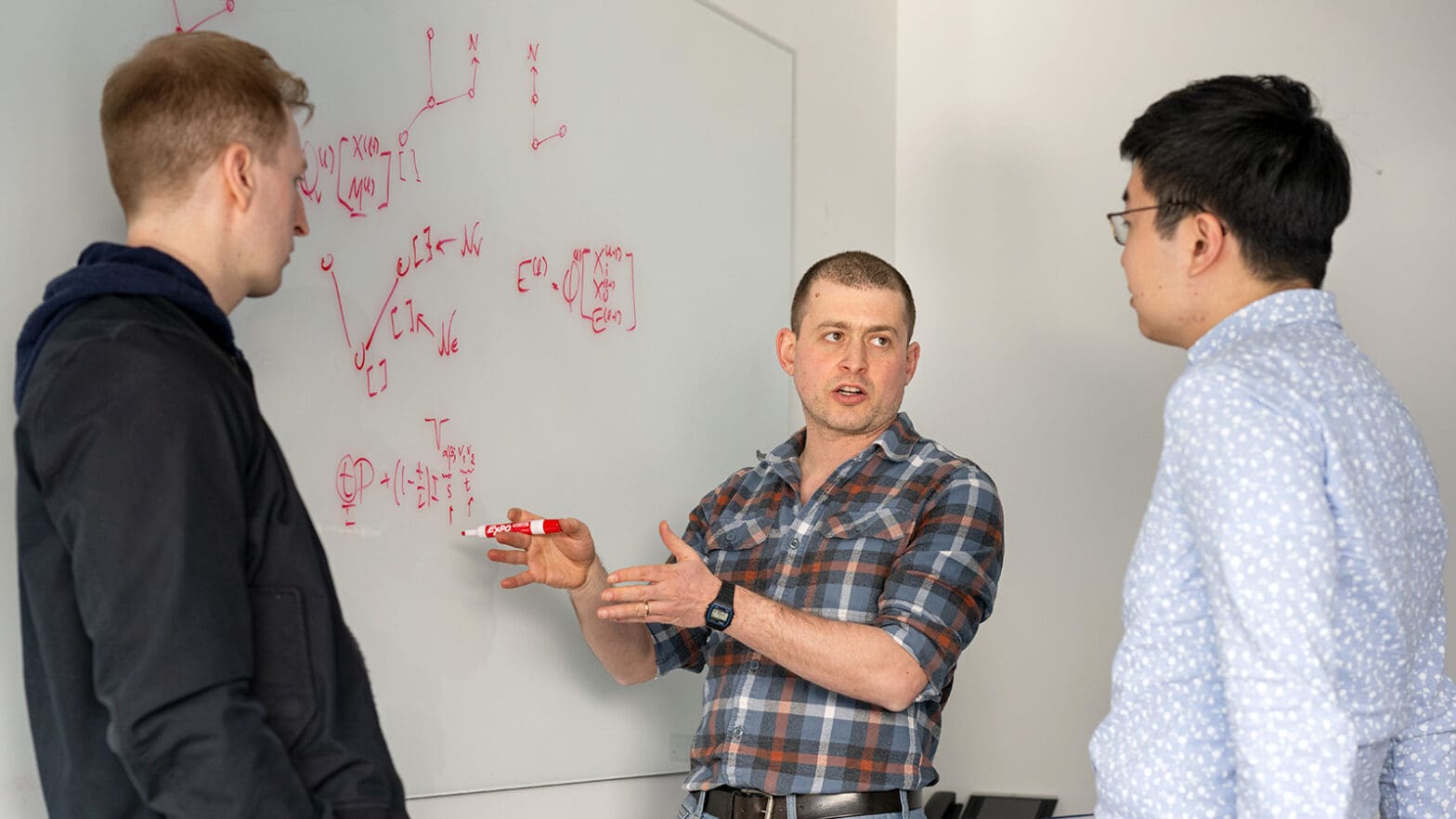

Boris Hanin, an assistant professor of operations research and financial engineering, is applying his background in mathematical physics — using math to develop theories about physical systems — to reveal a few simple rules that control neural networks, the massive algorithms that power much of today’s artificial intelligence.

The birth of the modern steam engine in the late 1700s was “just excellent innovators doing inspired engineering, tinkering in labs,” Hanin said. It wasn’t until well into the 1800s that scientists fully laid out the laws of thermodynamics, the theoretical framework that led to entirely new technologies.

“You can’t go from steam engine to jet engine without the notion of entropy,” Hanin said.

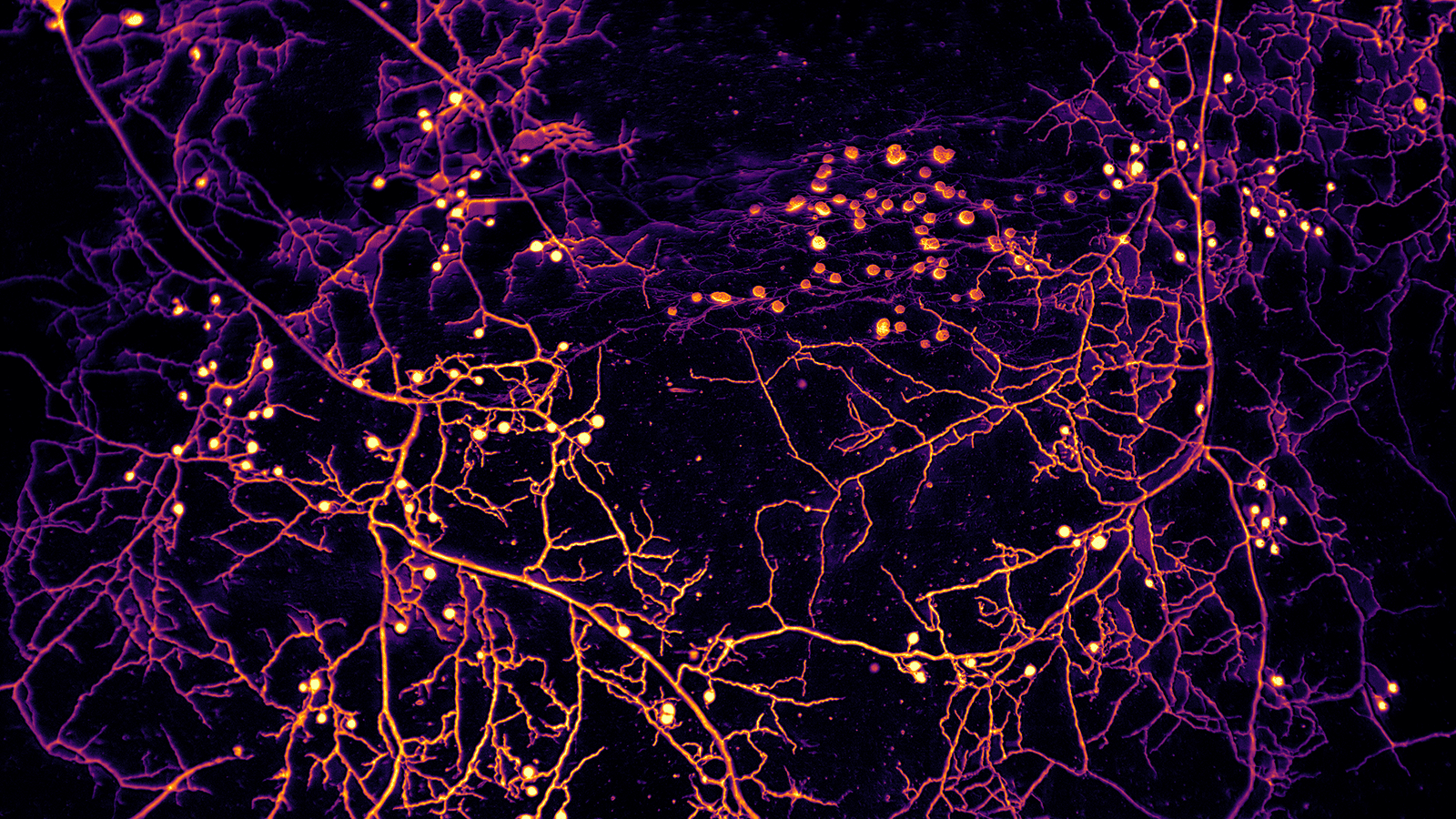

Even though they are artificial, human constructions, giant AI models are like complex physical systems, Hanin said.

“It’s actually quite possible to describe broadly the large-scale properties of neural networks in a similar way to how the Ideal Gas Law describes the large-scale properties of gases,” Hanin said. In fact, the size and complexity of neural networks are what give Hanin confidence that such a description is possible. Scientists can, for example, easily predict that turning up the thermostat in a sealed room will raise the air pressure, even though they can’t predict the behavior of any individual air molecule.

“That’s the law of large numbers. It’s the basis of all statistics,” said Hanin.
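The law of large numbers that Hanin mentions can be illustrated with a short simulation. This is a minimal Python sketch, not drawn from Hanin’s research: the function names are hypothetical, and uniform random numbers stand in for individual “molecules.” No single draw can be predicted, but the average over many draws settles near 0.5, and the spread of that average across repeated experiments shrinks as the number of draws grows.

```python
import random
import statistics


def sample_mean(n: int, seed: int = 0) -> float:
    """Average of n independent uniform draws on [0, 1).

    Each draw is a hypothetical stand-in for one 'molecule'; the true mean is 0.5.
    """
    rng = random.Random(seed)  # seeded so each experiment is reproducible
    return statistics.fmean([rng.random() for _ in range(n)])


def spread_of_means(n: int, trials: int = 200) -> float:
    """Standard deviation of sample_mean(n) across many independent experiments."""
    means = [sample_mean(n, seed) for seed in range(trials)]
    return statistics.pstdev(means)


if __name__ == "__main__":
    # Individual draws are random, but the aggregate becomes more predictable as n grows.
    for n in (10, 100, 10_000):
        print(f"n={n:>6}: mean={sample_mean(n):.4f}, spread={spread_of_means(n, trials=50):.5f}")
```

The spread falls roughly in proportion to one over the square root of the number of draws, which is why averages over enormous numbers of components, whether gas molecules or, on Hanin’s view, the parts of a large network, can be described with simple rules.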

The implications of such insights for AI systems could be enormous. Large neural networks generally perform better than smaller ones, but only after careful tuning. The larger the network, the more expensive it is to do this tuning by trial and error. A clear theoretical understanding of key variables that affect design choices could save a lot of time and money, Hanin said. “Part of the reason it’s so costly to align large models is that we are still in the steam-engine phase of neural networks.”

In recent research papers, Hanin and colleagues have begun to uncover meaningful relationships between the nature of neural networks and the statistics of the data they are designed to learn and interpret. “Little by little, this understanding is being extended,” he said.