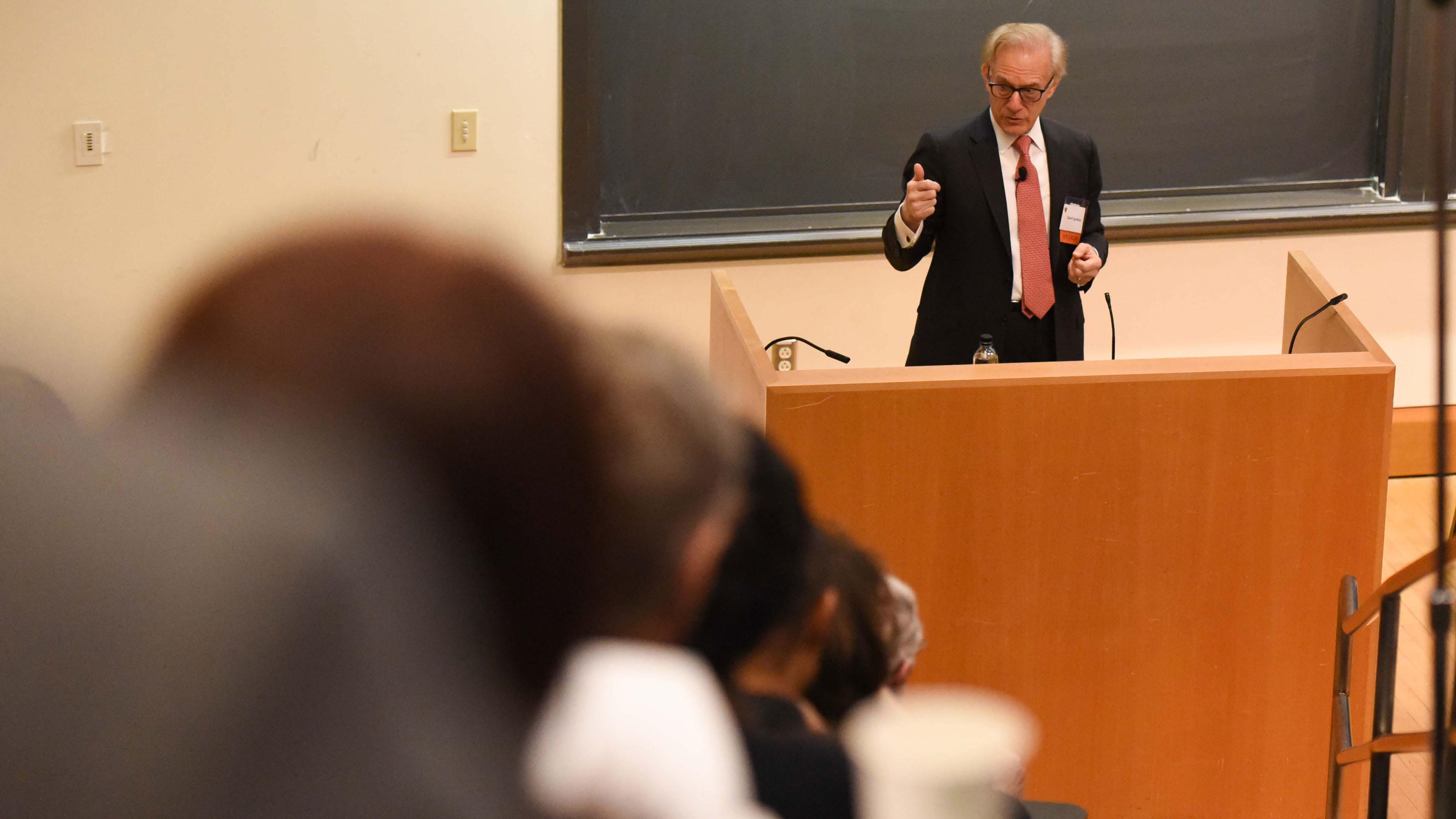

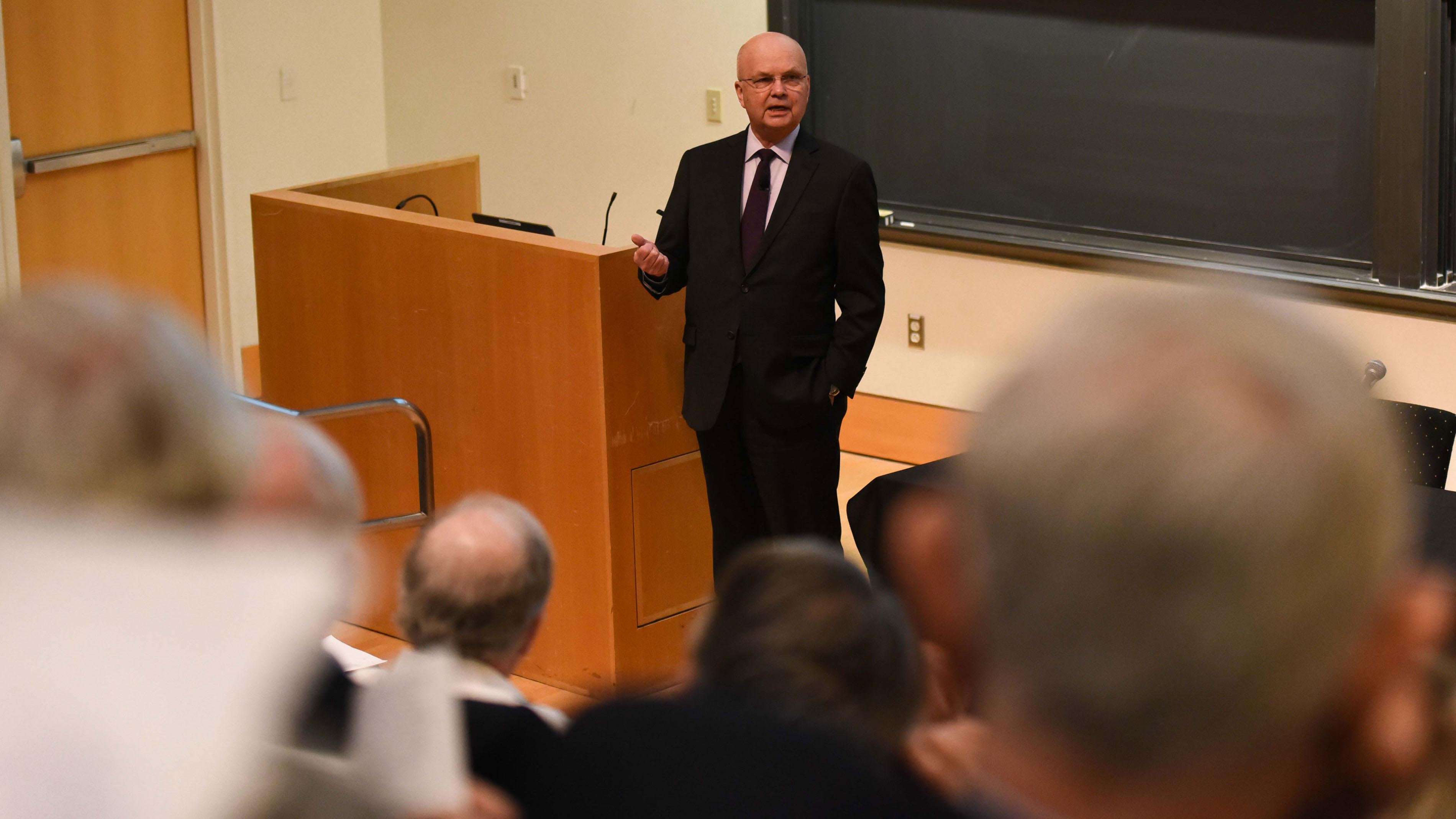

Although the cyberattacks during the 2016 presidential election came from abroad, “Fundamentally, the risk is internal,” said Gen. Michael V. Hayden, former director of the Central Intelligence Agency and National Security Agency. Russia, he said, has used our nearly limitless social media platforms to exploit our national divisions and the trend in American culture to rely more on emotion than fact.

“It should not be that easy for the Russians to do what they’re doing to us,” Hayden said.

Princeton hosted the April 7 forum “Defending Democracy: Civil and Military Responses to Weaponized Information.” The forum focused on rethinking the definition and scope of national defense to encompass cyberattacks and information warfare. It was the fourth annual Veterans Summit, hosted in previous years by Yale University and the U.S. Military Academy.

Speakers ranged across a wide array of disciplines, including military, computer science, legal, policy, journalism and social science. Mara Liasson, national political correspondent for National Public Radio, who moderated a panel, called the event “our exploration of dystopia,” and Washington Post foreign affairs columnist David Ignatius, who delivered opening remarks, called it the “Davos of disinformation.”

Speakers largely focused on campaign activities that have been traced back to Russia, including Facebook ads designed to reach voters in Michigan and Wisconsin, states that helped swing the election to Donald Trump; the theft and leak of embarrassing emails from Hillary Clinton’s campaign chairman and the Democratic National Committee; paid social media users, or “trolls,” spreading stories about Clinton’s controversies; and ads and accounts that amplify divisive social issues like gun rights, LGBT matters, race and immigration.

Panelists argued these attacks were aimed at undermining our very way of life, trying to destroy national unity by using the country’s own strengths, such as a free press and an open society, to turn Americans against Americans.

Hayden noted that Russian bots helped fan the flames of outrage during the recent “take a knee” NFL controversy, stirring up both sides on the issue and helping it trend on social media.

Taken as a whole, the cyberattacks, Hayden said, have a lot in common with 9/11 – an attack from an unexpected direction, exploiting a previously unknown weakness. The nation rallied in response to the 2001 attacks in large part because President George W. Bush set the tone, he said.

“We gotta go extraordinary,” Hayden said about the cyberattacks. “We as a nation don’t go extraordinary unless the president says ‘do it,’” and so far, that hasn’t happened, he said.

Panelists, including those from the event’s co-hosts, the Woodrow Wilson School of Public and International Affairs and the School of Engineering and Applied Science, spoke of how our society is now more vulnerable to manipulation by foreign adversaries. The Princeton Veterans Alumni Association also co-hosted the event, which was organized by staff of the Wilson School.

There’s a new vicious cycle in which social media and social disagreements feed upon each other, said Edward Felten, a Princeton professor of computer science and public affairs and director of the Center for Information Technology Policy (CITP). Felten called this a “feedback loop” between people and technology, in which algorithms determine what people see, and when people consume that content, it reinforces the algorithm. This, he said, leads to the “viral explosion” of some content.

“We know a lot about what happened in the 2016 election cycle,” Felten said. “But what we can do about it is a more difficult question.”

YouTube has an economic incentive to show more ads by keeping people on its platform for a longer period of time, said Zeynep Tufekci, an associate professor at the School of Information and Library Science at the University of North Carolina-Chapel Hill.

After people see the video they came looking for, such as a Trump rally, she said they are often guided to another video with a more extreme viewpoint, like a white supremacist rally – a phenomenon that Hayden, in his own speech, likened to the addictive empty calories of Doritos.

“The algorithm is feeding a certain kind of bias into people’s information stream,” Tufekci said.

Tufekci, a former CITP fellow, noted Facebook chief executive Mark Zuckerberg will be appearing before Congress on Tuesday to discuss a range of issues, from the disclosure of users’ personal data to the Russian meddling in the election. There have been many occasions over the last few years when he has given heartfelt public apologies and vowed to do better, only to end up making mere cosmetic changes to Facebook, she said.

“I don’t really want to hear more apologies. I don’t even want to hear legislators yelling at them. I want them to do their job and give us protections from this business model and this way of collecting data on all of us,” Tufekci said.

While there was general agreement about defining the problem, panelists disagreed about whether the best solution was legislative, self-correction by industry, deterrence and countermeasures, or some combination of those.

Rand Waltzman, a senior information specialist at the RAND Corporation, suggested creating a new, competing international social media platform that is independent, transparent and open to inspection.

Adm. Cecil Haney, who commanded the U.S. Strategic Command from 2013 to 2016, recalled the Cold War days when schoolchildren were instructed to duck and cover under tables. While not a terribly effective way to survive a nuclear attack, it was an indication that the country had organized itself right down to the school level, he said. The United States has so far failed to organize against weaponized information, he said.

If it takes labeling this the second Cold War to kickstart that organization drive, “then make it so,” Haney said.

Panelists on the topic of deterrence said it was important for the nation to identify where its “red lines” are, and publicly state under which scenarios it would respond against foreign adversaries.

“You need some doctrine,” said Jacob Shapiro, a professor of politics and international affairs at Princeton’s Woodrow Wilson School.

In a Princeton interview, Shapiro said platform operators need to be able to cooperate to identify hostile foreign organizations, and share information with each other to ban them from posting content. “You’re not restricting speech, but what you’re saying is that on certain platforms, there are certain entities that we’re just not going to allow to operate there.”

Shapiro, who was interviewed on Facebook Live the day of the event, said that industry has a strong incentive to get this problem under control. While platform operators have a short-run need to maximize ad revenue, they risk losing the trust of society in their systems, he said. Social media companies are spending more and more on content controls and internal governance, he said.

“They’re doing that because they recognize a long-run threat to their business model,” Shapiro said. “The Wild West of the last few years, I suspect, will go away over time just because the profit incentive for the companies over the long run demands that they achieve better control.”

Laura Rosenberger, director of the Alliance for Securing Democracy, endorsed a public information campaign aimed at “the new stranger danger” online.

Panelists and speakers lamented the power that social media has gained over people’s worldviews. Social media had once been seen as a great leveler of society, moving the power from institutions to the people, said Ignatius.

Newspapers were designed to help organize people’s worlds, but people have come to resent that filter, and the effect of social media has been to remove it, he said. The news business was designed to challenge people’s opinions, but these days, “people want their opinion confirmed. … They want to make you comfortable,” Ignatius said of social media platforms.

Ignatius spoke of false information as a tool used by British spies during World War II, to combat isolationist tendencies in the United States. Although it was done in the spirit of protecting democracy, it was “animated by a spirit of mischief” that has since been taken up by more nefarious actors.

Russia did not invent misinformation during the 2016 presidential campaign, Ignatius said. But weaponized information has become much more effective and toxic during this period, he said. Americans spend a ton of time on social media, and Facebook and YouTube in particular, making them vulnerable to this kind of attack, he said.

People have gotten addicted to free news, which is often “yellow journalism,” said Matt Chessen, a career U.S. diplomat. Real news online usually requires a subscription, he said. “Truth is not incentivized properly online. … Social media makes disinformation easy to disseminate.”

Clint Watts, a Robert A. Fox Fellow in the Foreign Policy Research Institute’s Program on the Middle East, said Americans go to social media to find happiness and organize their world, only to come away miserable. The Russians knew that older Americans are more likely to vote but less likely to be sophisticated enough to spot a scam on Facebook, he said.

“We don’t like to admit that our number one enemy is us,” Watts said.