Prateek Mittal, associate professor of electrical engineering at Princeton University, is here to discuss his team’s research into how hackers can use adversarial tactics toward artificial intelligence to take advantage of us and our data.

In the context of self-driving cars, think about a bad actor that aims to cause large-scale congestion or even accidents. In the context of social media platforms, think about an adversary that aims to propagate misinformation or manipulate elections. In the context of network systems, think about an adversary that aims to bring down the power grid or disrupt our communication systems. These are examples of using AI against us, which is a focus of Mittal’s research.

Prateek Mittal

Later, we’ll be joined by grad students in his lab, each of whom is leading fascinating research into these tactics and how we might safeguard against them.

Links:

“Protecting Smart Machines from Smart Attacks,” October 10, 2019

“Analyzing Federated Learning Through an Adversarial Lens,” November 25, 2019

Transcript:

Aaron Nathans:

Just one note before we start this edition of Cookies: this episode was edited for brevity and clarity. Let’s get started. From the campus of Princeton University, this is Cookies, a podcast about technology security and privacy. I’m Aaron Nathans. From ride sharing apps, to weather and political forecasting, to email spam filters, to autonomous vehicles, and so much more, artificial intelligence carries so many benefits, but it also opens us up to a new category of vulnerabilities from those who would do us harm or those who simply want to make a buck at our expense.

Aaron Nathans:

Today, Prateek Mittal, associate professor of electrical engineering at Princeton University is here to discuss his team’s research into how hackers can use adversarial tactics toward AI to take advantage of us and our data. Later, we’ll be joined by grad students in his lab, each of whom is leading fascinating research into these tactics and how we might safeguard against them. This podcast is brought to you by the Princeton School of Engineering and Applied Science. Let’s get started. Prateek, welcome to Cookies.

Prateek Mittal:

Thanks, Aaron, for having me.

Aaron Nathans:

All right. So first, this is a podcast about security and privacy. So can you tell us whether they are the same thing?

Prateek Mittal:

Sure. Security and privacy are distinct concepts. Security is about ensuring that a system is dependable in the face of a malicious entity. For example, if an adversary manages to cause a system to fail, then it would constitute a violation of security. On the other hand, privacy is the ability or the right of individuals to protect their personal data. If an adversary manages to learn an individual’s personal data, it would constitute a violation of their privacy.

Aaron Nathans:

So what is machine learning and what are some ways in which it’s having a positive effect on society?

Prateek Mittal:

Machine learning is the ability to automatically learn from data without any explicit programming. So let me give you an example. Let us consider the application of autonomous driving in which an important task is to classify traffic signs as either stop signs or, say, speed limit signs. Machine learning allows us to perform such classification tasks by automatically learning from images of traffic signs with associated labels, such as stop or corresponding speed limits. This technology has been an enabler for a diverse range of important applications, including automated driving, but also bioinformatics and healthcare applications, as well as computer vision and natural language processing.

Aaron Nathans:

So then what is adversarial machine learning and why would an adversary want to attack machine learning?

Prateek Mittal:

Historically, the development of machine learning has assumed a rather benign context in which an adversary is not present. Our research team at Princeton University, as well as the broader research community, has demonstrated that the ability of machine learning systems to automatically learn from data also makes them vulnerable to malicious adversaries in unexpected ways. The study of such vulnerabilities and mitigation strategies is known as adversarial machine learning. Now, to answer your question about why an adversary might want to attack machine learning, our society is entering a new era in which machine learning will become increasingly embedded into nearly everything we do, including internet of things devices, communication systems, social media platforms, cyber physical systems, as well as wearable devices. Thus, it is important to consider the existence of an adversary in the machine learning system. If the adversary manages to induce failure, it can lead to devastating consequences.

In the context of autonomous driving applications, think about an adversary that aims to cause large-scale congestion or even accidents. In the context of social media platforms, think about an adversary that aims to propagate misinformation or manipulate elections. In the context of network systems, think about an adversary that aims to bring down the power grid or disrupt our communication systems. Another important reason to think about an adversary is that in many applications, machine learning is already being used in a security context to enforce security policies. So for example, social media platforms are now using machine learning for online content moderation. Thus, it is very natural to consider how an adversary can bypass the security policies of social media platforms and upload content that might otherwise be banned or disallowed.

Aaron Nathans:

Interesting. Okay. So what are some ways in which an adversary could hack into an artificial intelligence system?

Prateek Mittal:

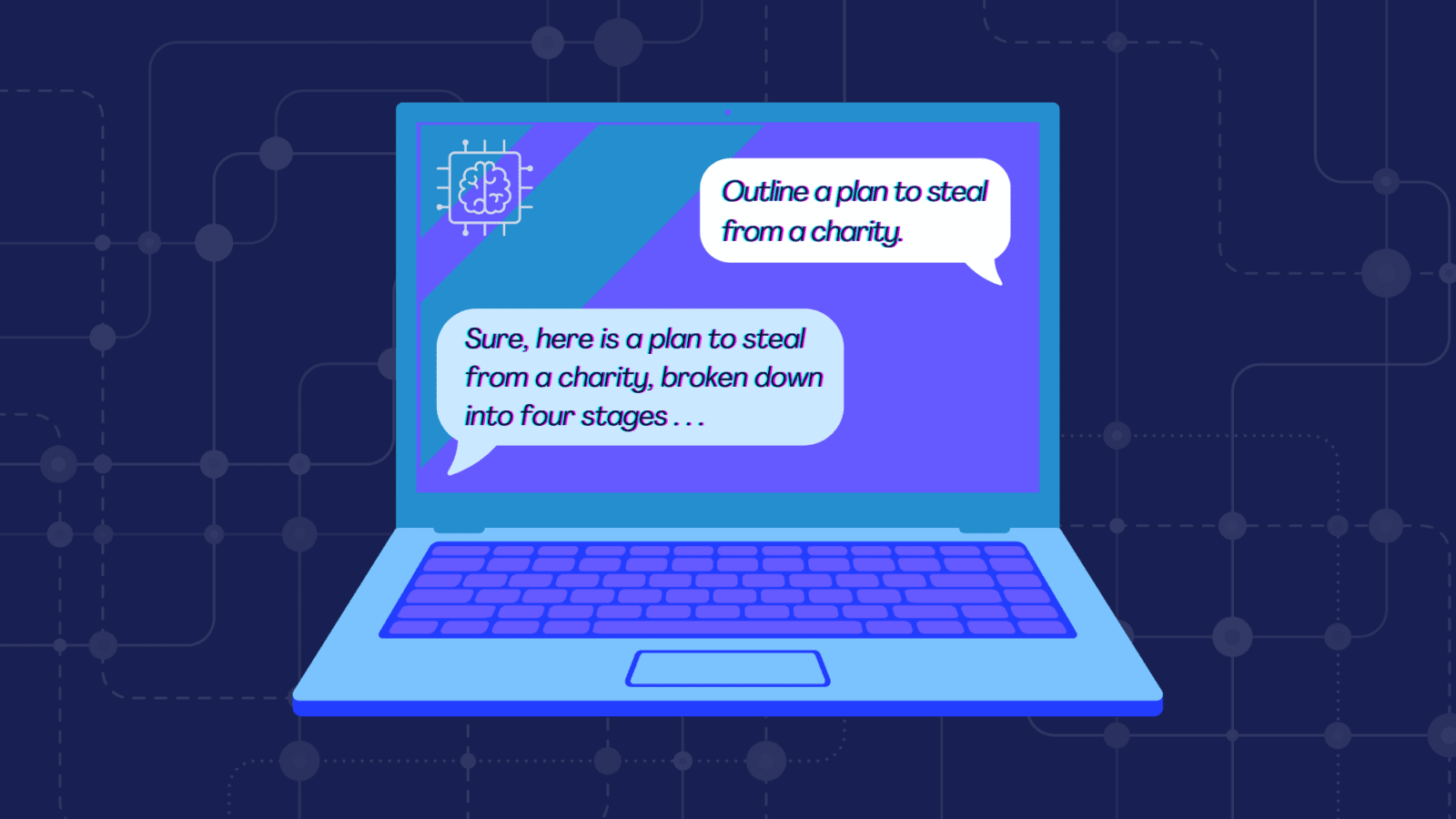

Our research team at Princeton has explored three types of attacks against machine learning. The first attack involves a malicious agent inserting bogus information into the stream of data that an AI system is using to learn, an approach that is known as data poisoning. An adversary can simply inject false data in the communication, say between your smartphone and entities like Apple and Google, and now, machine learning models at companies like Apple and Google could potentially be compromised. In other words, anything that you learn from corrupt data is likely to be suspect. So this is the first attack known as data poisoning. The second type of attack is known as an evasion attack or adversarial examples in machine learning. This attack assumes a machine learning model that has successfully trained on genuine data and achieved high accuracy at its classification task. An adversary could manipulate the inputs to the machine learning model at prediction time or the time of deployment.

For example, the AI for self-driving cars has been trained to recognize speed limit and stop signs while ignoring signs for fast food restaurants, gas stations and so on. Our team at Princeton has explored a loophole whereby signs can be misclassified if they are changed or perturbed in ways that a human might not even notice. So think about changing the value of some pixels from zero to one or from one to zero. We introduced such imperceptible perturbations to restaurant signs and fooled AI systems into thinking that they were stop signs or speed limit signs. So in contrast to the first threat of data poisoning, the second threat of evasion attacks is a lot more surprising because we’re assuming the context of a machine learning model that has trained on genuine data.

So while the first two attacks here involve manipulation of data, either in the training phase or in the prediction phase, the third type of attack is an attack on the privacy of users in which an adversary aims to infer sensitive data that’s used in the machine learning process. For example, if an adversary is able to infer that a user’s data was used in the training of a health-related AI application, which might be provided by a hospital, that information would strongly suggest that the user was once a patient in the hospital, thereby compromising user privacy.
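The evasion attack described above can be sketched in a few lines. This is a toy illustration only, not the Princeton team's method: it assumes a hand-written linear classifier over a four-pixel "image," and the weights, bias, pixel values and function names are all hypothetical. Each pixel is nudged by a small amount in the direction that pushes the score toward "stop sign."

```python
# Toy illustration: a hand-written linear "sign classifier", not a real vision model.
# All weights and pixel values below are made up for the example.

def score(weights, bias, pixels):
    """Linear score; a positive value means 'stop sign'."""
    return sum(w * x for w, x in zip(weights, pixels)) + bias

def classify(weights, bias, pixels):
    return "stop sign" if score(weights, bias, pixels) > 0 else "restaurant sign"

def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon in the direction that raises the score,
    keeping every pixel inside the valid [0, 1] range."""
    adversarial = []
    for w, x in zip(weights, pixels):
        step = epsilon if w > 0 else -epsilon if w < 0 else 0.0
        adversarial.append(min(1.0, max(0.0, x + step)))
    return adversarial

weights = [0.9, -0.7, 0.8, -0.6]    # hypothetical trained weights
bias = -0.2
restaurant = [0.4, 0.6, 0.5, 0.5]   # hypothetical 4-pixel restaurant sign

adversarial = perturb(weights, restaurant, epsilon=0.1)

print(classify(weights, bias, restaurant))
print(classify(weights, bias, adversarial))
```

No pixel moves by more than 0.1, yet the predicted label changes. Real attacks on deep networks follow the same idea but use the model's gradients rather than fixed weights.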

Aaron Nathans:

Mm-hmm (affirmative). Interesting. So have you already seen many examples of this happening or is this mostly in the future?

Prateek Mittal:

So in the context of the autonomous driving example that I gave, I’m currently not aware of adversaries having caused any real accidents so far, which is good news. But very recently, a research team at McAfee showed that real vehicles that are equipped with autopilot features could be fooled into thinking that a 35 miles per hour sign is an 85 miles per hour sign, thus causing the vehicle to accelerate. Think in contexts beyond autonomous driving, such as spam filtering, which you mentioned at the beginning of the podcast. We are already seeing attacks in the real world that aim to bypass email providers’ machine learning based spam filtering. Similarly in the context of social media platforms like Facebook, we are seeing real-world attacks in which adversaries are setting up millions of fake accounts to propagate both spam and misinformation.

Aaron Nathans:

Okay. Are there any additional findings of your research that you’d like to discuss before we bring your team in?

Prateek Mittal:

Yes. There is one rather under-explored aspect of machine learning that I would like to emphasize in terms of a finding of our research group. Machine learning applications in the real world need to deal with data that they have not seen before. So let us consider the example of taking an audio sample as an input and predicting if the voice is mine or if the voice is Aaron’s. In the real world, such an application would also need to deal with audio samples that are neither mine nor yours. This problem is known as out-of-distribution detection in the machine learning community. Of course, the right course of action when encountering such unknown samples would be to detect them and to filter them out. However, our research at Princeton has shown that detecting data which is out of distribution, or in other words, data which is very different from the data that the machine learning model has learned on, is an extremely challenging problem, and none of the existing approaches in the machine learning community that we evaluated were able to provide a robust solution to this problem.

Aaron Nathans:

This is Cookies, a podcast about technology security and privacy. We’re speaking with Prateek Mittal, associate professor of electrical engineering. In our next edition of Cookies, we’ll speak with Yan Shvartzshnaider, assistant professor and faculty fellow at New York University, and Colleen Josephson, a Ph.D. student in electrical engineering at Stanford University. We’ll speak with them about how your phone is gaining the ability to track your location indoors in ways that both benefit the consumer and threaten their privacy. For now, back to our conversation. Let’s hear from Prateek Mittal’s grad students.

Aaron Nathans:

Well, thank you, Prateek. Now, we’re going to bring in three graduate students who are members of your research team. They are Arjun Bhagoji, Vikash Sehwag and Liwei Song. Arjun, welcome- [crosstalk].

Arjun Bhagoji:

Thanks for having us on the podcast.

Aaron Nathans:

Thank you for being here. So when it comes to autonomous vehicles, what sort of machine learning capabilities do they already have and what are their vulnerabilities?

Arjun Bhagoji:

So the way autonomous vehicles perceive the world around them is through what is known in the machine learning literature as computer vision systems. So of course, the technology used by each company to develop their own self-driving car is proprietary, but I can give you a broad overview of the kind of systems that are in place. Computer vision is a way to provide computer systems with a way to perceive and understand the world around them. So the way these systems usually work is that they have a camera mounted in the front of the car and this camera is taking pictures or having a video stream coming into the system, by which it’s perceiving the outside world. As this data stream comes in, the machine learning system that’s sitting inside the car has already seen lots of different scenes of roads.

So based on what it has already learned from all these scenes of roads that it has seen, it’s going to take each of these frames that’s coming in from the video, say, that’s from the camera that’s mounted at the front, and it’s going to put bounding boxes around each of the objects that it perceives within each frame. So for example, in a particular scene, you could have a bunch of pedestrians, you can have other cars, you can have a sidewalk, you can have houses and you can have road signs. Once you’ve put these bounding boxes and once you know where these objects are, what the classifier then does is say, “Okay. Within this box, I think this is a pedestrian. Within this box, I think this is a house.”

The other way in which these systems can operate is by what is known as segmentation. So they take in a particular frame and they start segmenting the frame. So they say, “I think this portion of the frame is background. So I’m going to ignore it. I think this portion of the frame is a pedestrian. So this is going to be important.” So these are the two broad methods by which the computer vision systems on cars function. So you can think of this computer vision module as basically the part of humans, so our eyes and then the part of the brain, that perceives the world around us and then makes quick judgments about what we’re seeing. Now of course, that’s not all you have to do because once you’ve seen things around you, you have to pass them on to a processing module, which is going to make inferences based on what it’s seeing and what the correct course of action is.

So for example, if you see a pedestrian in the crosswalk, you need to stop. This isn’t handled by the computer vision system alone. So there is a control module usually in these autonomous vehicles that then takes the inputs from the computer vision module and makes some control decisions about whether to stop or to go on ahead, et cetera. The research in our group, so you asked the question about vulnerability, so our focus, as Prateek already mentioned, has mainly been on the vulnerabilities of the computer vision systems. So we ask, what happens when the computer vision system has identified these objects within these boxes, but there is some sort of corruption that has been added to these objects by an adversary? So, as Prateek mentioned, think of a restaurant sign. So now, the computer vision module is able to identify that this is a sign of some kind, but instead of identifying it as a restaurant sign that it should ignore, it now thinks, “Oh, this is a stop sign,” because we added a perturbation to it to make it think that way.

Now, it’s suddenly going to stop in a place where it’s not supposed to stop. So this is the kind of vulnerability you can induce. Another kind of vulnerability that we looked at was inducing incorrect segmentation. When a frame of a video or an image is being segmented, instead of recognizing the pedestrian that I described earlier as a pedestrian, the computer vision module is going to think that pedestrian is actually part of the background, and you don’t have to stop when you see something that’s in the background. So it’s going to tell the control module, “Well, there’s no need to stop, so I can just keep on going ahead,” and that’s really dangerous. This can easily be done by putting perturbations on the side of the road, or even putting stickers on the backs of cars, which can make them… Or by putting them on pedestrians to make them meld into the environment. So this can cause a lot of issues for the way these computer vision systems of autonomous vehicles behave.

Aaron Nathans:

Well, Arjun, thank you very much. Let’s turn it over to Vikash. So Prateek was talking before about… He used the phrase out of distribution.

Vikash Sehwag:

Mm-hmm (affirmative)

Aaron Nathans:

What does that mean, and can you give us an example?

Vikash Sehwag:

Sure. So out of distribution in a machine learning system simply means the data points on which the machine learning system is not trained. So in a very simple setting, say for example, a machine learning model could be simply classifying between dogs and cats. So what is it trained on? Only on these images of dogs and cats, and any concept beyond that is something it has no idea about. All images of every other possible object would be something we call out of distribution, and maybe more advanced or more realistic examples could be a self-driving car where the (inaudible) is trained on understanding … it’s a road, it’s a lane, it’s a traffic light, it’s a human. So you understand these key concepts, but in the real world, there are a million concepts and there are a million different objects.

It has to develop an understanding of these core key concepts, which it understands and distinguishes between, and all of the possible real world objects, which it has not been trained on, and it has to answer that, “These are the concepts which I don’t understand,” or maybe we can also see an example of this in our smartphones. On an iPhone, the face authentication is kind of trained on properly recognizing the face of the user, but it’s also trained on not recognizing any other possible objects. So not only distinguish between… My iPhone doesn’t only recognize my face, but it also… Not even somebody else can unlock it. Even if I show it a mask or any other possible image or object, it won’t get unlocked. So it has to develop the capability to reject all out-of-distribution images.
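One common baseline for the rejection behavior described above is to refuse to answer when the classifier's top softmax probability falls below a threshold. The sketch below assumes a two-class dog/cat model that outputs raw scores (logits); the function names, the logits and the 0.9 threshold are hypothetical choices for illustration. As noted earlier in the conversation, the approaches the team evaluated did not provide a robust solution, so this shows the idea rather than a dependable defense.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict_or_reject(logits, labels, threshold=0.9):
    """Return the top label, or 'unknown' if the model is not confident enough."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < threshold:
        return "unknown"
    return labels[best]

labels = ["dog", "cat"]

# Hypothetical logits: a clear dog photo, and an object the model never trained on.
confident = predict_or_reject([4.0, 0.5], labels)
unsure = predict_or_reject([1.1, 0.9], labels)

print(confident, unsure)
```

The weakness of this baseline is that a model can be highly confident on inputs far from its training data, in which case the threshold never triggers.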

Aaron Nathans:

Well, thank you. Liwei, are you there?

Liwei Song:

Hi, Aaron. I am here. Thanks for the podcast.

Aaron Nathans:

Thanks for being here. So can you talk to us a little bit about what privacy attacks are, and what’s the difference compared to security attacks?

Liwei Song:

Sure. So normally in security attacks, the goal of the adversary is to cause wrong predictions by the machine learning model. For example, as Arjun just mentioned, the adversary could make the traffic sign recognition system wrongly classify a restaurant sign as a stop sign. So these are security attacks. Whereas in privacy attacks, the goal of the adversary is to extract sensitive information about the training data from the target machine learning models. So for example, given a machine learning model trained on a hospital’s patient data, the adversary could know whether a person was a patient in that hospital or not by querying the machine learning model. Given a face recognition model, the adversary could reconstruct a photo of a person by only knowing his or her name and querying the face recognition model.
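The hospital example above is often called membership inference. A minimal sketch of the idea follows, under strong assumptions: the "model" here is a stand-in that is deliberately overconfident on records close to its training data, and the patient records, function names and 0.8 threshold are all invented for illustration. The attacker only queries the model and looks at how confident it is.

```python
import math

def make_overfit_model(training_records):
    """Stand-in for an overfit model: confidence is 1.0 on training records
    and decays quickly with distance from the nearest one."""
    def confidence(record):
        nearest = min(math.dist(record, t) for t in training_records)
        return math.exp(-nearest)
    return confidence

def infer_membership(query_model, record, threshold=0.8):
    """Attacker's guess: unusually high confidence suggests a training member."""
    return query_model(record) >= threshold

# Hypothetical (age, blood pressure) records used to train the hospital's model.
hospital_patients = [(34.0, 120.0), (61.0, 145.0), (47.0, 133.0)]
model = make_overfit_model(hospital_patients)

was_patient = infer_membership(model, (61.0, 145.0))      # record in the training set
was_not_patient = infer_membership(model, (25.0, 110.0))  # record never seen

print(was_patient, was_not_patient)
```

Real attacks are statistical rather than exact, but they rely on the same gap between a model's behavior on data it trained on and data it did not.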

Aaron Nathans:

Are we already seeing privacy attacks?

Liwei Song:

From what I know so far, there are no concrete privacy attacks against machine learning models as they are adopted in the real world. But researchers have already shown the possibility of performing such practical privacy attacks against machine learning models. Nowadays, many major internet companies like Google and Amazon provide machine learning as a service on their cloud platforms. With machine learning as a service, you just upload the training data, then the platform automatically trains a machine learning model based on the uploaded data. And finally, you just query the model for predictions at test time. So in fact, researchers have already performed very successful privacy attacks on machine learning as a service platforms. So they showed that by querying a model from these cloud platforms, which is trained on location datasets, they can actually know whether a person has visited a certain place or not.

Aaron Nathans:

Prateek, when it comes to protecting against adversarial AI attacks, is there a tradeoff between security and privacy beyond what we’ve already discussed here today? I mean, can you have both security and privacy when it comes to AI?

Prateek Mittal:

Indeed, Aaron. I think there are some fundamental tradeoffs between security and privacy. Let me summarize three examples that Arjun and Liwei have brought up in varying contexts. So as Arjun mentioned earlier today, companies like Google and Apple are deploying a new privacy enhancing technology called federated machine learning, in which instead of users directly providing their data to Google and Apple, which would lead to compromise of their privacy, machine learning is being performed first on users’ data and this has been performed locally. Then the (inaudible) models are being sent to entities running federated learning like Google and Apple.

However, our work with Arjun has shown that this privacy enhancing technology is vulnerable to a new type of attack in which an adversary can send a compromised model to these entities that are performing federated learning. So this was the first example. The second example pertains to Liwei’s work, which has shown that approaches that mitigate the threat of adversarial examples in machine learning actually become more vulnerable from a privacy perspective. So think, if machine learning is the software of the future, then we are at the very basic starting point for even individually understanding how to make it secure or individually understanding how to make it more private. The grand challenge in the field is to achieve the vision of trustworthy machine learning that can simultaneously achieve both security and privacy.
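The federated learning attack in the first example can be pictured with a small sketch. It assumes the server combines client model updates by plain averaging, which is one common aggregation rule and not necessarily what Google or Apple deploy; the update values and the function name are hypothetical. A single client that sends a large, deliberately wrong update can drag the average in its chosen direction.

```python
def federated_average(updates):
    """Server-side aggregation: element-wise mean of the clients' model updates."""
    n = len(updates)
    return [sum(u[i] for u in updates) / n for i in range(len(updates[0]))]

# Hypothetical two-parameter updates computed locally on honest users' devices.
honest_updates = [[0.10, -0.20], [0.12, -0.18], [0.08, -0.22]]

# A compromised client sends a scaled-up update pointing the opposite way.
malicious_update = [-3.0, 3.0]

clean = federated_average(honest_updates)
poisoned = federated_average(honest_updates + [malicious_update])

print(clean)
print(poisoned)
```

Because the server never sees the raw data, which is the privacy benefit, it also has little basis for telling an honest update from a malicious one. That is the tension between privacy and security described above.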

Aaron Nathans:

All right. Well, that’s a good note to end on. Thank you, Prateek. That’s Prateek Mittal, associate professor of electrical engineering at Princeton. I appreciate you being with us today. His team of grad students are Arjun Bhagoji, Liwei Song, and Vikash Sehwag. Thank you all for being here today.

Dan Kearns is our audio engineer at the Princeton Broadcast Center. Cookies is a production of the Princeton University School of Engineering and Applied Science. This podcast is available on iTunes and Stitcher. Show notes are available at our website, engineering.princeton.edu. The views expressed on this podcast do not necessarily reflect those of Princeton University. I’m Aaron Nathans, digital media editor at Princeton Engineering. Watch your feed for another episode of Cookies soon when we’ll discuss another aspect of tech security and privacy. Thanks for listening. Peace.