When the Computer Center opened along with the Engineering Quadrangle at Princeton in 1962, who knew that the Music Department would be one of its biggest users?

The composers were there at all hours, punching their cards and running huge jobs overnight on the room-sized, silent IBM 7090.

With the help of Princeton engineers, they were helping light the spark of the digital music revolution.

Working without the ability to hear what they were creating, listening only to the music in their minds, these classical music composers managed to synthesize some of the trippiest music you’ll ever hear. But it was also the sound of progress, as they broke new ground in how digital music is created. Some of their advances live on to this day in music synthesis software.

Much of the music you’ll hear on this episode was created by James K. Randall, the Princeton music professor who is credited with showing the computer’s early promise for creating nuanced music.

List of historical music played in this episode:

“The Silver Scale,” Newman Guttman

“Bicycle Built for Two,” Max Mathews

“Quartets in Pairs,” James K. Randall

“Mudgett: Monologues by a Mass Murderer,” James K. Randall

“Lyric Variations for Violin and Computer,” James K. Randall

“Music for the Film, ‘Eakins,’” James K. Randall

“Computer Variations,” Hubert Howe

“Changes,” Charles Dodge

Sources (other than interviews):

“Soft Sound: A Guide to the Tools and Techniques of Software Synthesis,” Quinn Powell, California State University, Monterey Bay.

“Interview with Max Mathews,” C. Roads, Computer Music Journal, Winter 1980

Transcript:

Aaron Nathans:

In 1962, with the United States and the Soviet Union locked in the space race, a brand new tool arrived on the Princeton University campus. An IBM model 7090, the state of the art in computing, became a centerpiece of Princeton’s gleaming new Engineering Quadrangle. It was the campus’ first true centralized Computer Center.

Aaron Nathans:

Demand for time on the new machine was high from across the campus, so high in fact, that the center’s managers took pains to keep track. Those around at the time recall that Princeton entities with government contracts, such as Astrophysics and the Plasma Physics Lab, were among the biggest users of computing time overall. But the single biggest non-sponsored departmental user of the computer wasn’t engineering, and it wasn’t mathematics, or statistics, or chemistry, or biology. It was, by a mile, the Music Department.

Aaron Nathans:

So why did these musicians, used to working with violins, and pianos, and clarinets, and vocalists, lay eyes on this hulking, slow, primitive, musically unproven silent machine, and have the audacity to say, “I’m going to make it sing”? Today’s episode is that story.

Aaron Nathans:

From the School of Engineering and Applied Science at Princeton University, this is Composers and Computers, a podcast about the amazing things that can happen when artists and engineers collaborate. I’m Aaron Nathans.

Aaron Nathans:

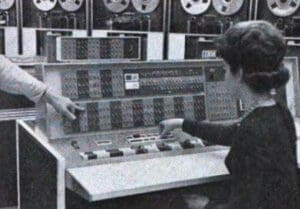

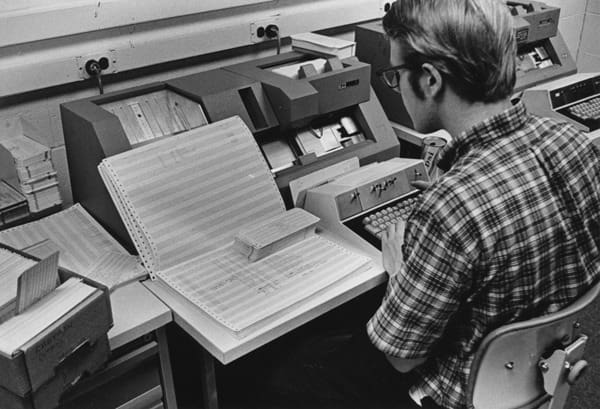

Part Two: Composers in the Computer Center. It was pretty rare to have a computer in the early 1960s, even for a university. You had to go to places like NASA Headquarters to find one. Now there was a brand new IBM 7090 on Princeton’s campus. Powerful as it was for its day, its memory could fit maybe a few chapters of a book. Its equipment had a massive footprint. It was spread out over three rooms. Instead of typing your program in, you went to a keyboard that created a series of punch cards, which gave the computer its instructions. You’d feed those cards into a reader and then wait hours, sometimes a day, for your printout to be ready.

Aaron Nathans:

The Computer Center opened along with the sprawling new Engineering Quadrangle during the scientifically heady days of John F. Kennedy’s administration. The size of the engineering complex was a signal of just how popular the field of Engineering had become. Just 50 years earlier, there hadn’t been an engineering school at all. Today, one out of four Princeton undergraduates is pursuing an engineering degree. There was plenty of room in the E-Quad for a new facility like the Computer Center, which took up the better part of a hallway.

Aaron Nathans:

The center was staffed largely by engineering students, and run by an engineering professor, Ed McCluskey. Its location in the E-Quad spoke volumes about who its intended audience was. But intentions aside, the computer immediately caught the eye of the students and faculty of the Music Department.

Hubert Howe:

When I was there, I started working with Jim Randall, and he was doing research. And he had this problem of looking through various sets of notes and things, trying to pick out their properties and everything in it. And I thought, “That’s something that a computer could do.” And I had a couple of roommates who were programming computers at that point, so I went over to the Computer Center and I found that I could learn programming on my own there.

Aaron Nathans:

That’s Hubert Howe, Emeritus Professor of Music at Queens College in New York. He got his undergraduate degree in music from Princeton in 1964, and his doctorate here in 1972. His teachers included Milton Babbitt, whom we covered in the last episode of this series. Soon, James K. Randall, a young member of the faculty who had himself studied with Babbitt, found that he, too, was entranced with the computer and its possibilities.

Aaron Nathans:

Another music student soon joined them in the Computer Center in late 1962. Tobias Robison was, at the time, a first year graduate student composer. He was, like many were at the time, an aficionado of 12-tone music. He had trained on an IBM during the prior summer in New York. Toby Robison.

Toby Robison:

I was in the Music Department, and people knew very quickly that I had some knowledge of computers. So they were asking me, “What can you do?”

Aaron Nathans:

And so a few researchers ran programs on the IBM to better understand the structure of music, like Bach cantatas. But one day, Toby Robison was handed a box with a tape and an instruction manual. Inside that box was the software that promised to make the computer not just analyze music, but actually create it. The tape was courtesy of a fellow named Max Mathews. By the mid 1960s, Mathews would have a formal tie to Princeton. He was a member of the Music Department’s Advisory Committee.

Aaron Nathans:

But at this point in roughly 1963, that hadn’t happened yet. Mathews was a high ranking engineer at Bell Labs in Murray Hill, New Jersey. He also just happened to be a music lover. Bell Labs was a bustling center of innovation. Mathews’ boss was John Pierce, who invented satellite communication. Pierce had a lot of clout and gave Mathews lots of leeway. Mathews knew the possibilities for the lab’s IBM mainframe computer. And in 1957, he coaxed the machine to make crude musical sounds for a 17 second piece, written by a colleague, dubbed The Silver Scale. Hubert Howe.

Hubert Howe:

Computer music was sort of invented by Max Mathews at Bell Labs, who had a high enough position in the hierarchy of people at Bell that he could put money into this research. And he saw that this was possible, and nobody else had ever thought of generating music by creating the wave. Max Mathews’ musical ideas were not very sophisticated. He played the violin, and he was interested in music in some serious way.

Aaron Nathans:

Here’s Jeff Snyder, present day lecturer in music at Princeton.

Jeff Snyder:

In the ’60s, the first computer voice example is Max Mathews makes a computer sing “Daisy, Daisy,” the bicycle built for two. And that’s the earliest computer speech, which is actually also computer singing, which is an interesting example. <<MUSIC>>

Aaron Nathans:

Mathews was able to get the computer to create a sound by building software he called simply MUSIC, all caps. MUSIC became the first computer program to be widely used for sound generation. It worked by creating digital audio waveforms using direct synthesis. Here’s Rebecca Fiebrink, a professor at the University of the Arts London, who received her PhD in Computer Science from Princeton in 2011.

Rebecca Fiebrink:

In this context, I would say direct synthesis … If you are Max Mathews and working many decades ago, wanting to synthesize sound on a computer, one of the most straightforward ways of doing that is the equivalent of taking a pen and drawing a wave, drawing a shape that repeats on a piece of paper. So you can imagine you take your pen and you go up to the right, and then down to the right, and you’ve got the top part of a triangle. And then you keep doing that, right?

Rebecca Fiebrink:

If you do that, but instead of having your pen going up and down, you are pushing a speaker cone in and out, in that same triangle shape, that’ll make a sound at certain frequencies, and it’s going to be a buzzy sound, and it’s going to be a pitch that you could sing, like “da.” Right? So that would be a simple way to synthesize a sound. A slightly more complicated, but not too much more complicated way of synthesizing a sound, would be to draw a sine wave. So, a very smooth line that goes up, and then down, and then up, and then a down. If you make your speaker cone move with that shape, you’re going to hear a very pure sound. Again, it’s going to be something that you can sing at a pitch like “da.” It’s going to sound a little bit like a tuning fork, after you’ve hit a tuning fork, if you know what that sounds like.
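
As a rough illustration of the two shapes described here, the sketch below computes a triangle wave and a sine wave as plain lists of numbers, one number per instant of speaker-cone position. It is written in modern Python and is not Mathews’ code; the sample rate and the function names are assumptions chosen only for the example.

```python
import math

SAMPLE_RATE = 8000  # samples per second; an illustrative value, not from the episode


def triangle_wave(freq_hz, n_samples, sample_rate=SAMPLE_RATE):
    """Return n_samples of a triangle wave with values between -1 and 1."""
    samples = []
    for n in range(n_samples):
        phase = (n * freq_hz / sample_rate) % 1.0  # position within one cycle, 0..1
        # Rise from -1 to 1 over the first half cycle, fall back over the second.
        samples.append(4.0 * phase - 1.0 if phase < 0.5 else 3.0 - 4.0 * phase)
    return samples


def sine_wave(freq_hz, n_samples, sample_rate=SAMPLE_RATE):
    """Return n_samples of a sine wave with values between -1 and 1."""
    return [math.sin(2.0 * math.pi * freq_hz * n / sample_rate)
            for n in range(n_samples)]
```

Calling `triangle_wave(440.0, SAMPLE_RATE)` would give one second of the buzzy tone, and `sine_wave(440.0, SAMPLE_RATE)` one second of the purer, tuning-fork-like tone, both still only numbers until something turns them into speaker motion.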

Aaron Nathans:

The first version of Mathews’ software made sound by using a triangle wave, a bit of a harsh sound. But it was mostly just a way to show that a computer could make sound at all. <<TRIANGLE WAVE SOUND>> The next year in 1958, he released the second generation of the MUSIC program, which offered 16 different wave forms created by oscillators. He manipulated these wave forms by oscillating them, varying the voltage and the amount of time he supplied that voltage. Those wave forms included the sine wave, those smooth up and down curves that make a smoother sound. <<SINE WAVE SOUND>>

Rebecca Fiebrink:

The computer itself, computationally this is far from the most complicated thing that a computer had to do at that point in time. Right? But it took somebody with an interest in sound, realizing, “Oh, I could do this thing with a computer.”

Aaron Nathans:

This is composer Mark Zuckerman.

Mark Zuckerman:

Yeah. Essentially, what the computer programs did was model a synthesizer. I mean you had oscillators, filters, noise generators, and other such, and you would mix them all together to produce a stream of numbers that would be a waveform that you could convert.

Aaron Nathans:

It was likely Mathews’ third generation program, MUSIC III, that found its way to Princeton and into the hands of young Toby Robison, a student of professor Lewis Lockwood in 1963. Howe thinks it was given to him by James Randall. Toby Robison.

Toby Robison:

I think it was probably Lewis Lockwood, although it could have been Randall, who said one day, “Hey, we’ve got this software that Max Mathews wants to share with us. Can you install it?” And so I did. And later on, we got the better version. It came with 100 pages of documentation. I looked at it and I said, “Oh my God.” I create sine waves. I make these sample beds. And then I run them through filters. I can make them as loud or as soft as I want. I can add them together. I can have a subroutine that picks pitches out of these beds. I can just imagine these things filtering and filtering to each other. And it was really tremendously exciting. So, you installed it and you started playing with it.

Aaron Nathans:

The composers entered their punched cards to activate the MUSIC III program on the IBM 7090. After they waited hours for their job to be run, they would be handed a roll of magnetic tape.

Paul Lansky:

You’d submit your job, and then you might have to come back the next day. So it depends on what the backup was like. It was insane.

Aaron Nathans:

That’s Paul Lansky, who was a young composer who arrived at Princeton in 1966. Today, he’s the William Shubael Conant Professor Emeritus. After composers picked up their digital tape from the ready room at the Computer Center, they weren’t nearly done. That’s because the music was still all zeros and ones. Numbers represent sound, but they don’t make a sound. Which is to say, this music was digital.

Aaron Nathans:

The human ear only hears music in analog form. And so, in order to be heard, the music needed to be converted from digital to analog. Any device that plays music today will do that for you, but that’s something that the IBM in the Computer Center wasn’t equipped to do.
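
To make the “numbers represent sound” point concrete, here is a small present-day sketch that packs a list of sample values into the bytes of a WAV file, the kind of numeric stream a modern device’s converter turns into analog sound. It uses Python’s standard `wave` and `struct` modules and says nothing about the format of the tapes carried to Bell Labs; the 16-bit depth, the sample rate, and the function names are assumptions for illustration.

```python
import io
import struct
import wave


def to_pcm16(samples):
    """Convert floats in [-1, 1] to little-endian 16-bit integer samples."""
    clipped = [max(-1.0, min(1.0, s)) for s in samples]
    return b"".join(struct.pack("<h", int(round(s * 32767))) for s in clipped)


def wav_bytes(samples, sample_rate=8000):
    """Return the contents of a mono 16-bit WAV file holding the samples."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)          # mono
        wav_file.setsampwidth(2)          # 2 bytes = 16 bits per sample
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(to_pcm16(samples))
    return buffer.getvalue()
```

Writing the result of `wav_bytes(...)` to a file ending in `.wav` produces something any current player can convert to sound, which is the step the composers had to drive to Bell Labs for.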

Interviewer:

So you’d go through all that and you still wouldn’t have music or even sound?

Paul Lansky:

That’s right. It was very frustrating. I would make an appointment at Bell Labs and drive up there. It was 40 miles through central New Jersey. And when you get to Bell Labs, you have to call Max Mathews’ office to get somebody to send somebody down to walk you to the conversion lab. So you weren’t allowed to walk around Bell Labs by yourself. You might steal intellectual property. (laugh) And you’d put your digital tape on the digital tape drive. You’d have somebody do it for you, and then you’d put a magnetic tape on the tape recorder and record the results of your job. And then you’d walk back to your car sick to your stomach because it was so awful.

Interviewer:

What was awful about it?

Paul Lansky:

It sounded awful. (laugh)

Aaron Nathans:

But Robison said the frustration was worth it.

Toby Robison:

It was frustrating, but you have to bear in mind that when we got results, they were so exciting. We were doing something that had never been done before. Hadn’t even been imagined a few years before, and it was so exciting to be doing it. It just made it seem worthwhile. And not everybody had to make those drives. If several people were creating tapes, someone would take a whole bunch and bring them back again.

Aaron Nathans:

Here’s Ge Wang, a computer music entrepreneur who received his doctorate in computer science from Princeton in 2008.

Ge Wang:

That’s what they had to work with because that was the state of the art. But I think that the allure, the freedom of the computer to create all these fantastical sounds and automations really was a more than good enough reason to go work with this fairly unwieldy kind of a workflow, because that’s sound that you can’t really get through any other means.

Aaron Nathans:

Soon, a new version of the music program arrived from Bell Labs, MUSIC IV, created by Mathews and Joan Miller. Robison installed that one, and he was impressed with how much easier it was to use, and how simple it was to manipulate sound. Toby Robison.

Toby Robison:

Now, maybe you didn’t want sine wave. Maybe you wanted a sawtooth wave. <<SAWTOOTH WAVE SOUND>> Something that went up and then bounced down again, and went up and bounced down, because you’re making a clarinet sound. Then you would have a sawtooth wave generator. And then you would ask that generator, “Give me the following pitches, one after another.” So one thing that you did that was very basic in MUSIC IV was you created a sine wave or some other kind of wave that you were going to use in your music, and then you called on it to get pitches.
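
The idea of a wave generator that is asked for one pitch after another can be sketched as follows. This is a loose modern analogy in Python, not MUSIC IV syntax; the function names, the sample rate, and the use of frequencies in hertz are assumptions made for the example.

```python
SAMPLE_RATE = 8000  # samples per second; an illustrative value


def sawtooth_note(freq_hz, duration_s, sample_rate=SAMPLE_RATE):
    """One note of a sawtooth wave: each cycle ramps from -1 up toward 1, then drops."""
    n_samples = int(duration_s * sample_rate)
    return [2.0 * ((n * freq_hz / sample_rate) % 1.0) - 1.0
            for n in range(n_samples)]


def play_sequence(freqs_hz, note_duration_s):
    """Ask the generator for the given pitches one after another."""
    output = []
    for freq in freqs_hz:
        output.extend(sawtooth_note(freq, note_duration_s))
    return output
```

For instance, `play_sequence([220.0, 330.0, 440.0], 0.5)` returns a second and a half of samples: three half-second sawtooth notes back to back.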

Aaron Nathans:

This is “Quartets in Pairs” by Jim Randall. It’s one of the earliest surviving recordings from the Computer Center in the E-Quad. It was created using Max Mathews’ MUSIC IV software. <<MUSIC>>

The computer was soon upgraded to an IBM 7094 in 1964 by adding more memory and replacing some components. But two of Robison’s fellow composers thought Max Mathews’ MUSIC software could use an upgrade, as well. Hubert Howe said MUSIC IV was written by engineers, not composers. He said it was effective, but not terribly intuitive if your goal was to compose music. For instance, if you wanted to play a sound, you didn’t use the program to select a musical note; instead, you chose a frequency.

Aaron Nathans:

And so Howe and Godfrey Winham, Princeton’s first Ph.D. recipient in music composition, who had recently joined the faculty, dug into a yearlong project to improve upon Max Mathews’ software. Beyond just changing the interface, they also expanded the number of unit generators, the digital building blocks of sound, to be able to produce more sounds more easily.

Aaron Nathans:

Some of the innovations they created live on in music programs that composers still use today. Godfrey Winham was a young Englishman, and a devotee of Milton Babbitt’s 12-tone serialist approach. Winham was the only one of this crew to have worked with Babbitt at the RCA Mark II Synthesizer in New York. Here’s Hubert Howe, talking about his work with Winham on the new computer program at the Computer Center.

Hubert Howe:

There were a couple of desks in there, and Godfrey and I could sit there and talk. And by the way, we did our best work from about 10:00 to 3:00 in the morning. We took the framework that was developed by Max Mathews, which was very workable, but very cumbersome.

Hubert Howe:

For example in MUSIC IV, there was no way to specify pitch. And we developed something called octave point pitch class form, where you have the integer part of the note tells the octave and the decimal tells the pitch class. So if you want C sharp above middle C would be 8.01, C would be zero, B would be 11.11, and then 9.00 would be the next octave.
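
A minimal sketch of how an octave point pitch class number might be turned into the frequency the software ultimately needs is shown below. It assumes equal temperament and that 8.00 is middle C at about 261.63 Hz (consistent with C sharp above middle C being 8.01); under that reading, the B above middle C comes out as 8.11. The function name and the reference frequency are illustrative assumptions, not taken from MUSIC IV B.

```python
def pch_to_hz(pch, middle_c_hz=261.6256):
    """Convert octave.pitch-class notation (e.g. 8.01) to a frequency in Hz.

    The integer part is the octave; the two decimal digits are the pitch
    class, counted in semitones above C (00 = C, 01 = C sharp, ... 11 = B).
    """
    octave = int(pch)
    pitch_class = round((pch - octave) * 100)
    semitones_from_middle_c = (octave - 8) * 12 + pitch_class
    return middle_c_hz * 2.0 ** (semitones_from_middle_c / 12.0)
```

With these assumptions, `pch_to_hz(8.09)` is the A above middle C at roughly 440 Hz, and `pch_to_hz(9.00)` is exactly one octave above `pch_to_hz(8.00)`.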

Aaron Nathans:

The new program was written in the Macro Assembler language BFAP, hence the name of the updated program, MUSIC IV B. Paul Lansky.

Paul Lansky:

You communicated with the computer one line at a time. And with the BFAP program, part of what you’d write out would be the code. It would be an oscillator leading into an envelope generator, leading to an outbox, and then you’d have data. So you have program code and data, and the data would be: start at time zero with this pitch, this loudness, using this instrument. So you’d name your instruments in BFAP. And I remember being frustrated by it and not finding it very satisfying, but when you had people like Jim Randall who would write this sensational music, it was reason to keep going.
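
The split described here, instrument code on one side and note data on the other, can be sketched in modern Python as follows. This is only an analogy and not BFAP or MUSIC IV B syntax: the instrument is an oscillator feeding an envelope, and each data entry names an instrument with a start time, duration, pitch (given here as a frequency) and loudness. All names, values, and the sample rate are assumptions for the example.

```python
import math

SAMPLE_RATE = 8000  # samples per second; an illustrative value


def sine_with_envelope(freq_hz, amplitude, n_samples):
    """A toy instrument: a sine oscillator shaped by a fade-in/fade-out envelope."""
    out = []
    for n in range(n_samples):
        osc = math.sin(2.0 * math.pi * freq_hz * n / SAMPLE_RATE)
        # Triangular envelope: 0 at both ends of the note, 1 in the middle.
        env = 1.0 - abs(2.0 * n / max(n_samples - 1, 1) - 1.0)
        out.append(amplitude * env * osc)
    return out


# The "code" part: named instruments.
ORCHESTRA = {"tone": sine_with_envelope}

# The "data" part: (instrument, start_s, duration_s, freq_hz, amplitude).
SCORE = [
    ("tone", 0.0, 0.5, 440.0, 0.5),
    ("tone", 0.25, 0.5, 660.0, 0.3),
]


def render(score, orchestra):
    """Mix every note in the score into one list of samples."""
    total = max(int((start + dur) * SAMPLE_RATE) for _, start, dur, _, _ in score)
    mix = [0.0] * total
    for name, start, dur, freq, amp in score:
        note = orchestra[name](freq, amp, int(dur * SAMPLE_RATE))
        offset = int(start * SAMPLE_RATE)
        for i, sample in enumerate(note):
            mix[offset + i] += sample
    return mix
```

Calling `render(SCORE, ORCHESTRA)` yields three quarters of a second of samples in which the two notes overlap, the same kind of number stream that then had to be converted to sound.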

Aaron Nathans:

We’ll be right back with more of “Composers and Computers.” <<THEME MUSIC, INTERMISSION>> At its heart, this podcast is a story about interdisciplinary research, and here at the Princeton University School of Engineering and Applied Science, interdisciplinary work is part of who we are.

Aaron Nathans:

We have a wide array of initiatives that cut across disciplines, including bioengineering, quantum computing, robotics, smart cities, data science, and yes, engineering in the arts. You can keep track of all the exciting things happening here by following us on social media. If you’re on Twitter or Instagram, you can follow us @EPrinceton. That’s the letter E, Princeton. You can also find us as Princeton Engineering on Facebook, LinkedIn and YouTube.

Aaron Nathans:

We’re halfway through the second of five episodes of this podcast, “Composers and Computers.” On our next episode, we’ll move into the late 1960s and early to mid 1970s as Princeton engineers and composers work together to not just compose music on a computer, but to try to hear it too – it’s harder than it sounds. Let’s not get ahead of ourselves, though. Here’s the second half of part two of “Composers and Computers.” <<END OF INTERMISSION>>

Aaron Nathans:

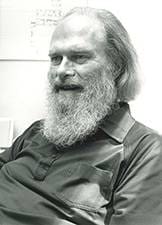

James Randall earned an MFA in music composition from Princeton in 1958, which included studying piano with Milton Babbitt before joining him on the faculty that year. Like Babbitt, Jim Randall was a big personality, and you didn’t want to get on his bad side. Seth Cluett of Columbia, who studied at Princeton, said he has vivid memories of J.K. Randall.

Seth Cluett:

I mean, J.K. is brilliant and was brilliant, and constantly innovating and pushing people to innovate. And he’s unsung in the history of Princeton Music insofar as… I mean, he’s famous as a Princetonian. I remember him holding traffic back to allow deer to cross on Harrison. It was one of these things where, this guy with a big beard would be out in the middle of the street, holding his hands out so that deer could cross safely. Really interesting character. But he’s the open-minded person who was creating an environment of curiosity and experimentation.

Aaron Nathans:

Howe described Randall as a rigorous thinker who was working on serious new music when they met. Howe described him as an excellent pianist.

Hubert Howe:

Randall is the great anti-Babbitt. Even though he was very close to Babbitt, admired him very much. Babbitt was a strict serialist. And he had a whole bunch of followers that did the same thing that he did. Randall was not, he was trying to forge his own original directions. And he was successful at doing that.

Aaron Nathans:

Ken Steiglitz, now a Professor Emeritus of Computer Science, was a young faculty member at the time. He remembered Randall coming to him with a problem. The computer was playing a note, but Randall said the pitch was just the slightest bit off. Ken Steiglitz.

Ken Steiglitz:

So, Jim Randall kept coming by and saying, “You know, there’s something a little wrong.” And I checked the code and everyone else would listen to it and it sounded fine. And it turns out that Jim Randall’s hearing was super human. And he was able to detect the error in the pitch that had gone unnoticed maybe for all eternity, essentially.

Ken Steiglitz:

And I was amazed by Jim Randall’s ear. I think he was well known for having a superhuman perception. But I provided the correct… In fact, it took a fair amount of work to work out the exact formula, which differed by a very small amount. It doesn’t happen all the time, but in certain situations the difference in pitch between the approximation and the exact long complicated formula, is perceptible by some people. And so Jim was vindicated. He was amazing. The people who did this work were a special breed.

Aaron Nathans:

Jim Randall is well known in computer music circles for creating works that showed early on what a computer was capable of doing in the right musical hands. This is his 1965 work, “Mudgett: Monologues by a Mass Murderer.” <<MUSIC>> Randall premiered it at Tanglewood in Western Massachusetts that summer. Hubert Howe.

Hubert Howe:

Mudgett is all about this stuff, something that he found in the newspapers in Philadelphia from the turn of the century, actually it was the 1890s, this guy, Herman Webster Mudgett, who was a mass murderer in Chicago around the time of the world’s fair out there. And there’s this long string of unsolved murders. And the only way they got him was that he had contracted with this insurance agent to take out a life insurance policy, and then a body would be produced and he would collect money. And of course the body who was produced was the insurance agent.

Hubert Howe:

But he had thought that he was onto this somehow. And they caught up with him at that point, but they had no idea how many people he actually killed. And the piece was very interesting. I mean, you couldn’t always see the connection to this story, but that was what inspired him. I think I actually was the person who spliced Mudgett together for him. Came back from Bell Labs with a couple of clean copies and I put it together.

Aaron Nathans:

And then there was Randall’s piece “Lyric Variations for Violin and Computer” released by Vanguard Records in 1969. <<MUSIC>> Paul Lansky.

Paul Lansky:

There was a young faculty member named Jim Randall, who was working on a great big piece at that time called “Lyric Variations for Violin and Computer.” And it was with Paul Zukofsky playing the violin. Paul was a well-known new music person. And I was blown away by what Jim had done. He had a famous five minutes. I think you can still find this recording around. He had five minutes, it took six hours on the mainframe. And it was the only thing the mainframe was doing at the time. So it was quite impressive. Oh, it just sounded like a new world. It was a way of conceiving a musical space that was different. And it was 20 variations, each was exactly one minute long and they were threaded together. So it used up 20 minutes in a fascinating way.

Aaron Nathans:

Mark Zuckerman.

Mark Zuckerman:

I mean, we called the program that was generating this stuff an orchestra. He invented an orchestra that had distinctive qualities that picked up from the violin and also complemented the violin. So it was a way of being able to hear orchestration in a new and very convincing way. And all of the stuff that he did, most of his music had the kind of very dreamy quality to it where everything was very sustained and a computer is ideal for that kind of thing.

Aaron Nathans:

Here’s Mark Zuckerman on Randall’s last known computer piece.

Mark Zuckerman:

His score for the PBS documentary on Thomas Eakins is just gorgeous. <<MUSIC>> I mean, it unfolds so slowly and all of the… Has this huge shimmering effect, which he concentrated on getting things where the attack transients were a lot of information in them.

Aaron Nathans:

James Randall, successful as he was at computer music, didn’t stay at it for long. By the early 1970s, he had pivoted back to traditional instruments, and there was a period of time where he stepped away from making music altogether. Hubert Howe says that was a shame.

Hubert Howe:

And I think he was also disillusioned with the music world, which is after all, you can’t make a lot of money. You don’t get very much prestige or respect, there are other things like that. He was very much… Well, for example, grew this big beard, which he had for many years. He didn’t have it when I first met him, but then he grew it and it stayed there. He looked like a bum in some ways. Just because of that and the way he dressed, he never would dress up. I don’t think I ever saw him wear a tie.

Aaron Nathans:

Other than Jim Randall’s, there’s not a lot of music created in the Computer Center from this era that survives today. Some of it exists on 60-year-old magnetic tape, which has degraded over the years.

Aaron Nathans:

Toby Robison has a piece of his own sitting in the closet and he dares not play it. He calls it Haiku Pieces, with 17 notes in each piece. One of the few surviving pieces is by Hubert Howe — he goes by the nickname Tuck. “Computer Variations” is what Howe calls his first acknowledged composition. <<MUSIC>> By 1972, when he graduated from Princeton, he was already on the faculty at Queens College in New York. He would stay there his whole career, continuing to make computer music, which he still does today.

Aaron Nathans:

There’s also a surviving piece from Charles Dodge. <<MUSIC>> He had been a Columbia grad student who made good use of the Princeton Computer Center during this time, because he wanted to make music on a computer and Columbia didn’t offer that. He would spend a year as an instructor at Princeton starting in 1969. Dodge finished his first computer piece in 1967, a five-minute piece, at least at the time. He’d eventually expand it (into the finished piece “Changes”). He made a tape of it and brought it to Greenwich Village for its world premiere at a concert. Charles Dodge.

Charles Dodge:

It was a chamber music concert. So there were players on the stage, except for my piece. It was just one loudspeaker on the stage. And there was a pretty well known, mostly piano teacher from New York, who was the teacher of a number of pianists I knew. Maybe, he was originally from Brazil. He wore a cape and a beret, and looked quite like a stock character from Greenwich Village. When my five-minute piece was over, he wadded up his program, stalked to the stage and threw it into the loudspeaker.

Aaron Nathans:

This, not surprisingly, devastated Dodge.

Charles Dodge:

Well, he was protesting both the technology, I think, and the effect that the music had on him, which apparently didn’t please him.

Aaron Nathans:

But the experience didn’t deter Dodge, who had the support of more than just his fellow composers. The engineers at Princeton, the students, faculty, and staff of the Computer Center also had his back. Although the composers knew the software very well, only the Computer Center workers could really dig into the operating system. And the composers definitely needed help when the computer itself kept changing. In 1967, the Computer Center swapped out the IBM 7094 for the next model up, the IBM 360/67. Although they didn’t know it at the time, this operating system and hardware platform would endure, forming the template for IBM computers that continue even to this day.

Aaron Nathans:

Robison recalled working with engineers like Roald Buehler, who was director of the Computer Center from 1966 to 1970. He also worked with Lee Varian. Varian was one of the main workers at the Computer Center. He would eventually become the university’s information technology architect and spent years before that as systems manager.

Aaron Nathans:

Lee Varian got his undergraduate and master’s degrees in Electrical Engineering at Princeton in 1963 and 1966, respectively. So at the time the Computer Center opened in the E-Quad, he was an undergraduate working there as a systems programmer. Toby Robison.

Toby Robison:

He was a cool guy. He just understood programming very deeply. He was the guy I would go to for really difficult problems.

Aaron Nathans:

Varian had taken an undergraduate course in Computer Analysis with the legendary computer pioneer Forman Acton, which introduced him to the early Computer Center at Gauss House. A little Victorian house near where Thomas Sweet is today, in Princeton. Varian said he was surprised when he first noticed composers working in the Computer Center. He said his first memory of musicians there was Paul Lansky, and soon after Jim Randall. Lee Varian.

Interviewer:

Were you surprised to see a composer there?

Lee Varian:

Yes, the first time I ran into him, I was astonished to find out what he was working on. But it’s one of those things. If you can figure out how to make it into a computer program, you can sit there and run it.

Aaron Nathans:

Varian said the Computer Center was a community. There were regulars, both workers and users who spent considerable time there, Varian included. People knew each other by sight, and they fed off each others’ suggestions and went to each other for help. Varian said that because his future wife was on campus too, he was in no rush to get home, and regularly logged 12 hour days there, often six or seven days a week.

Lee Varian:

Part of it was fun.

Interviewer:

What was fun about it?

Lee Varian:

Just the challenge of trying to keep the systems functioning well, and up to date.

Aaron Nathans:

Hubert Howe.

Hubert Howe:

I don’t know that there are many places where somebody in music like that, could go in and use that. They wouldn’t think that computer is for that. And once I was there and doing stuff, it was no problem. Like once people knew about it, everybody accepted it.

Aaron Nathans:

Lee Varian.

Lee Varian:

I mean, Princeton has always tried to be good at a few things rather than good at everything, because they’re just not big enough to do that. And this was obviously something that was very important to the music department, to the extent that they supported faculty who had that interest, in ways that many other music departments probably weren’t able to. I mean, it did sound like both Princeton and Columbia were heavily committed to computer music in that era. And I think they were probably among the very few colleges around the country that were doing that at that point.

Aaron Nathans:

Hubert Howe.

Hubert Howe:

This is one of the great things about that Computer Center in those days: it brought together everybody. I was in music, but there were other people in the humanities that were doing stuff. There were also people in sciences and engineering, and they all had to come to that place. And we all had to use the same key punch machines and everything. And there were people that, when I had a program that crashed or something, I could take it to people and get help.

Aaron Nathans:

Toby Robison eventually left the Music Department and went on to have a career as a computer programmer. He said his time at Princeton helped him learn an enormous amount about programming. He still lives in Princeton today. And while he still enjoys atonal music, he has come around to romantic music as well. He says there’s still plenty of new things to say with romantic music, even if he isn’t the one saying them.

Aaron Nathans:

His most profound memory of the Computer Center days was watching a composer work, trying to get the computer to create music with a sense of feeling, that elusive bit of humanity, which he said still bedevils musicians today, as they try to coax feeling out of a machine. Toby Robison.

Toby Robison:

When I was 13 years old and had not yet reached puberty, I was a clarinetist. And my teacher was trying to explain to me how to play a piece of music with expression. And he would put little notes on notes and say, “This one should be a little louder. This should be a little softer.”

Toby Robison:

And years later, looking at those marks he made, I realized what he was really trying to get me to do was to emote. But at that age I couldn’t understand. He couldn’t communicate it to me. But he could get me to come close to it with these marks. So, hard to teach a young child to emote: either they do or they don’t.

Toby Robison:

How do you teach a computer to emote? This was surely one of the most interesting questions we had in the 1960s. And we had one composer there who was really trying to tackle it. And I really can’t remember his name. Don’t know what happened to the music.

Interviewer:

Do you remember what methods he used to try to get it to emote?

Toby Robison:

Well, the really important thing is you’ve got to be very careful not to have notes appearing in very regular order. You think of drummers playing very precisely. You think of other musicians playing notes very precisely. But in fact, a human being, if you look at an analysis, classical music, if you’re looking at an audio program that will show you the layout of exactly what’s happening with the up and down and the frequencies and so on, you’ll see that there are lots of little tiny variations, and those little tiny variations are where the expression comes from.

Toby Robison:

If you want to try to do that with a computer, the tempting thing to do is to find some way to randomize how far apart the notes are. But that’s not what an accomplished musician does. An accomplished musician is really in control of what they’re doing and you have to figure out what do they do? What can I do that adds a little bit to the notes coming before, after the beat, a little bit to the notes being louder or softer, that conveys patterns of emotion. It’s a very interesting problem.

Aaron Nathans:

In our next episode, we’ll follow the Computer Center as it moves across the street into its own building. We’ll talk about how composer Godfrey Winham and engineer Ken Steiglitz worked on solving the composers’ biggest challenge, hearing the music they were creating without having to drive across New Jersey.

Aaron Nathans:

We’ll also listen to some trippy music that was produced during what might be described as the golden age of computer music at Princeton. This has been “Composers and Computers,” a production of Princeton University’s School of Engineering and Applied Science. I’m Aaron Nathans, your host and producer of this podcast.

Aaron Nathans:

I conducted all the interviews. Our podcast assistant is Mirabelle Weinbach, who also created all the wave sounds we heard in this episode. Our audio engineer is Dan Kearns. Thanks to Dan Gallagher and the folks at the Mendel Music Library for collecting music for this podcast. Graphics are by Ashley Butera. And Steve Schultz is the director of communications at Princeton Engineering.

Aaron Nathans:

This podcast is available on iTunes, Spotify, Google Podcasts, Stitcher, and other platforms. Show notes, including a listing of music heard on this episode, sources, and an audio recording of this podcast are available at our website engineering.princeton.edu. If you get a chance, please leave a review, it helps. The views expressed on this podcast do not necessarily reflect those of Princeton University. Our next episode should be in your feed soon. Peace.